Overview of five open-source LLMOps tools that improve observability, evaluation, deployment, and cost control for reliable production LLM workflows.

César Miguelañez

Managing large language models (LLMs) is challenging. Issues like unpredictable outputs, rising costs, and performance drops can harm businesses, costing them $1.9 billion annually. Open-source tools are stepping up to address these problems by improving monitoring, evaluation, deployment, and ongoing optimization of LLMs.

Here’s a quick look at five tools that stand out in the LLMOps space:

MLflow: Adds detailed tracking and observability to LLM workflows, helping teams debug and optimize performance.

Langfuse: Focuses on deep observability, tracking token usage, model behavior, and feedback for better evaluation.

BentoML: Simplifies deploying and scaling models with efficient packaging and high-performance serving.

Latitude: Improves reliability with a feedback-driven "Reliability Loop" to refine prompts and monitor errors.

OpenLLM: Offers OpenAI-compatible APIs for open-source models, making deployments straightforward.

Each tool targets specific challenges in LLMOps, offering cost savings, improved accuracy, and reduced errors. Whether you need better monitoring, faster deployment, or more reliable outputs, these tools can help streamline your workflows.

1. MLflow

MLflow adds a layer of observability to LLM workflows with its Tracing feature, which captures detailed execution data for each request. This helps tackle the unpredictability often associated with LLMs by giving developers the tools to identify bugs and unexpected behaviors in complex workflows. With over 20 million monthly downloads and 20,000 GitHub stars, MLflow has established itself as a key tool for teams handling intricate AI applications.

Observability Features

One of MLflow's standout features is its one-line autologging. By using a simple mlflow.<provider>.autolog() command, developers can enable automatic tracing across more than 20 GenAI libraries, including OpenAI, LangChain, LlamaIndex, and Anthropic. Additionally, MLflow integrates seamlessly with OpenTelemetry, allowing trace data to be sent to popular observability platforms like Grafana, Prometheus, Datadog, and New Relic. This enhanced visibility has enabled organizations to cut token spending by 30-50% by analyzing usage patterns more effectively.

Framework Integrations

MLflow supports both Python and JavaScript ecosystems, with Version 3.6 extending its reach to tools like Vercel AI SDK, LangChain.js, and LangGraph.js. These integrations help teams continuously refine their LLM operations. Moreover, the AI Gateway centralizes API key management and automatically traces every request, working seamlessly across providers like Anthropic, Google Gemini, Amazon Bedrock, and Azure OpenAI. Sam Chou, Principal Engineer at Barracuda, highlighted its impact:

"MLflow 3.0's tracing has been essential to scaling our AI-powered security platform. It gives us end-to-end visibility into every model decision, helping us debug faster, monitor performance, and ensure our defenses evolve as threats do".

Self-Hosting Support

MLflow is completely open source under the Apache 2.0 license and does not impose per-seat fees. Deployment is straightforward, thanks to a Docker Compose bundle that includes the tracking server, a PostgreSQL backend, and OTLP trace ingestion. For production use, the mlflow-tracing package offers a lightweight alternative with a 95% smaller footprint compared to the full MLflow package, reducing dependencies. These features make MLflow a powerful tool for managing LLMOps workflows efficiently.

2. Langfuse

Langfuse offers specialized observability for LLM workflows, tracking everything from token usage to model parameters and prompt–completion pairs. With more than 23,197 stars on GitHub and over 8,000 active instances every month, it has become a go-to choice for teams managing production AI systems. Its advanced observability features are designed to streamline and optimize LLM workflow monitoring.

Observability Features

Langfuse records structured logs for every request, capturing details like the prompt, response, token usage, latency, and any intermediate tool calls. These logs are then grouped into sessions, making it easier to follow multi-turn conversations [27,32]. The platform also visually maps agent workflows, showing logic flows and tool interactions in a clear, graphical format.

It includes features such as LLM-as-a-judge scoring, thumbs up/down feedback, and manual annotation queues for better evaluation [28,30]. A centralized prompt management system allows users to version, test, and deploy prompts. These prompts are directly linked to traces, enabling teams to monitor performance across iterations. Impressively, 90% of updates in production environments are written to the database within just 10 seconds.

Framework Integrations

Langfuse is designed to work seamlessly with a variety of frameworks, simplifying its integration into existing workflows. With support for over 50 libraries and frameworks, it works with tools like LangChain, LlamaIndex, the OpenAI SDK, and LiteLLM. The Python @observe() decorator captures function inputs, outputs, and metadata, ensuring detailed tracing. For LangChain users, the LangfuseCallbackHandler automates end-to-end tracing of chains, agents, and tool interactions with minimal code changes. Built on OpenTelemetry standards, Langfuse ensures compatibility with existing observability tools and avoids vendor lock-in.

Self-Hosting Support

Langfuse is fully open source under the MIT license [34,39], making it a flexible option for teams. It has seen over 5.5 million Docker pulls and 7 million SDK installs each month. Production deployments utilize Postgres, ClickHouse, Redis, and S3-compatible storage to handle trace data and support high-throughput analytics [29,31]. Thanks to its V3 architecture, Langfuse has dramatically reduced prompt API latency - from 7 seconds to just 100 milliseconds - through infrastructure upgrades and Redis caching. This self-hosted setup ensures reliable performance for LLMOps in demanding production environments.

3. BentoML

BentoML takes the complexity out of deploying machine learning models, offering a standardized and efficient way to move them into production. This open-source framework uses a unique packaging format called the "Bento" archive, which bundles together everything needed for deployment - source code, model references, and dependencies. Whether you're working with AWS, Azure, Google Cloud, or on-premises servers, BentoML ensures deployments remain consistent across environments [42,47].

Deployment Flexibility

BentoML is designed to fit into a variety of setups, from public clouds to on-premises and hybrid environments. Its BentoCTL tool simplifies integration with CI/CD pipelines by generating Terraform scripts. The results speak for themselves: Neurolabs reported that BentoML helped them reduce their time-to-market by nine months and cut compute costs by 70% in December 2025. Similarly, Yext, an enterprise search company, saw compute costs drop by 80–90% while achieving double the throughput after adopting BentoML's elastic scaling framework.

One standout feature is its autoscaler, which keeps GPU utilization above 70% while eliminating idle compute costs through a "scale-to-zero" capability. For Kubernetes deployments, BentoML significantly reduces cold start times by preloading model weights during the image build phase, a process that can otherwise take over 10 minutes [46,48]. By December 2025, Mission Lane was running 24 production services entirely powered by BentoML's CI/CD capabilities. This adaptability provides a strong backbone for managing models efficiently while integrating advanced tools and frameworks.

Framework Integrations

BentoML supports an extensive range of machine learning frameworks, including PyTorch, TensorFlow, Keras, Scikit-Learn, and XGBoost. For large language model (LLM) workflows, it integrates seamlessly with high-performance inference engines like vLLM and orchestration tools such as LangChain and LlamaIndex [45,50,44,48,49]. This means you can serve multiple models from different frameworks through a single endpoint. Its "Runners" feature adds another layer of efficiency by scaling model inference independently from the business logic [45,49].

In July 2022, Woongkyu Lee and the Financial Data Platform team at LINE used BentoML to build an MLOps platform integrated with MLFlow. This allowed data scientists to deploy models from various frameworks into production-ready APIs. Lee explained:

"BentoML can solve these problems by enabling backend engineers to focus on developing business logic, and data scientists to develop and deploy model APIs".

Self-Hosting Support

For teams that need control over their infrastructure and data, BentoML offers robust self-hosting options. Released under the Apache 2.0 license, it allows organizations to maintain complete oversight of their operations, a critical feature for workflows involving LLMs [51,53]. BentoML handles millions of requests daily across global organizations [45,42]. When paired with vLLM for production-scale LLM serving, it delivers nearly 8× higher throughput compared to similar setups. Teams can deploy on dedicated GPU servers or use Kubernetes for orchestration, with built-in Prometheus metrics to monitor performance [3,45,52].

4. Latitude

Latitude is an open-source AI engineering platform designed to improve the reliability of LLM-powered products in production. It supports a complete end-to-end LLMOps workflow by focusing on observability and continuous improvement through what it calls a "Reliability Loop." This process involves capturing production traffic, gathering human feedback, identifying failures, running regression tests, and automating prompt adjustments. Unlike tools that primarily handle deployment or model serving, Latitude emphasizes monitoring and iterative refinement.

Observability Features

Latitude uses OpenTelemetry to automatically capture detailed interaction data, including streaming information, without requiring manual setup. This includes inputs, outputs, metadata, and performance metrics. The platform's AI Gateway serves as a bridge between your application and AI providers, enabling smooth logging and allowing prompt updates or model changes without modifying application code.

Visual tracing maps every request's journey through spans and traces, helping engineers identify bottlenecks in areas like tool calls, database queries, or model completions. For streaming applications, Server-Sent Events (SSE) provide real-time visibility into tool calls and model interactions. These features have led to an 80% reduction in critical production errors and a 25% improvement in accuracy.

Latitude also offers Live Evaluations, which continuously analyze production logs and track quality metrics such as safety, helpfulness, and hallucination rates. Its Error Analysis feature automatically groups recurring failures, making it easier to identify common issues and monitor emerging problems. Performance monitoring includes metrics like latency (p50, p95, p99), throughput, token usage, and cost-per-request, with special attention to Time to First Token (TTFT) and Inter-Token Latency for streaming systems. All these capabilities integrate seamlessly with popular frameworks, simplifying LLM development.

Framework Integrations

Latitude works with widely used AI frameworks like LangChain, LlamaIndex, Haystack, DSPy, CrewAI, and the Vercel AI SDK. It also supports major model providers, including OpenAI, Anthropic, Google (Gemini/Vertex AI), Amazon Bedrock, Azure, Cohere, Mistral, and local models via Ollama. Official SDKs handle tasks like authentication, request formatting, and response parsing.

Teams can choose between two integration methods: using Latitude as an AI Gateway for automatic logging and tracing, or manually fetching prompts and uploading logs for complete infrastructure control. The platform's OpenTelemetry (OTLP) compatibility allows teams to export traces from their existing OpenTelemetry stacks to Latitude for centralized monitoring. Latitude's GEPA optimization method accelerates prompt iteration by up to 8x (Agrawal et al., 2025).

Self-Hosting Support

For teams that require full control over their infrastructure, Latitude provides flexible self-hosting options under the LGPL-3.0 license. A production-ready self-hosted setup includes components like a web application, API gateway, background job workers, WebSockets for real-time updates, PostgreSQL, Redis, and S3-compatible storage. Deployment options range from Docker Compose for single-server setups to container orchestration platforms like Kubernetes or ECS, as well as PaaS solutions like Heroku or Fly.io. Helm Charts are also available for Kubernetes deployments.

For production environments, using S3-compatible object storage (DRIVE_DISK=s3) and managed database services like RDS or ElastiCache is recommended for better scalability and persistence. Security measures include restricting access to database and Redis ports, using strong passwords, and storing API keys securely in Kubernetes Secrets or AWS Secrets Manager. Teams can disable anonymous usage data collection by setting OPT_OUT_ANALYTICS=true in their configuration.

5. OpenLLM

OpenLLM, created by the BentoML team, bridges the gap between local experimentation and scalable production deployment. It allows teams to serve open-source models - like Llama 3.3, DeepSeek R1, Qwen 2.5, Mistral, and Phi-4 - as OpenAI-compatible APIs almost instantly. The best part? If you're already using the OpenAI Python client, no code changes are needed - just update the base_url to your self-hosted OpenLLM instance.

Deployment Made Simple

OpenLLM doesn’t just make API integration straightforward; it also simplifies deployment across various environments. Using "Bentos", which are BentoML's standardized deployment units, it packages models for Docker and Kubernetes environments. Start testing locally on a laptop, then scale up to managed cloud platforms like BentoCloud for production workloads with autoscaling. OpenLLM also supports private model repositories, so enterprises can securely manage their fine-tuned models using the same tools. For gated models like Llama, you’ll need to export your Hugging Face token (HF_TOKEN) before serving, ensuring OpenLLM has access to the model repository.

Seamless Framework Integrations

Built on the BentoML framework, OpenLLM employs vLLM as its inference backend, ensuring production-grade performance while adding a management layer. This layer includes features like a Chat UI, API compatibility, and deployment packaging, making it easy to integrate with modern workflows. It works natively with Hugging Face, so any open-source model on the platform can be served effortlessly. Plus, with full OpenAI API protocol compatibility - including streaming and function calling - OpenLLM slots right into orchestration tools like LangChain and LlamaIndex as a direct alternative to OpenAI endpoints. On top of that, it offers robust monitoring tools to ensure stability in production.

Observability at Its Core

OpenLLM provides built-in observability tools to make monitoring and testing a breeze. A centralized dashboard via BentoCloud offers real-time monitoring, while the /chat endpoint includes a Chat UI for quick response testing. For production, features like autoscaling and enterprise-level deployment monitoring ensure a smooth transition from testing to reliable, scalable systems.

Flexible Self-Hosting Options

OpenLLM is open-source under the Apache-2.0 license, making it free to self-host. You can deploy it on a range of infrastructures using Docker or Kubernetes, or opt for one-click deployment to BentoCloud with a pay-as-you-go pricing model. This flexibility provides an excellent way to cut down on OpenAI costs. If an open-source model meets your needs, you can migrate without touching your existing code.

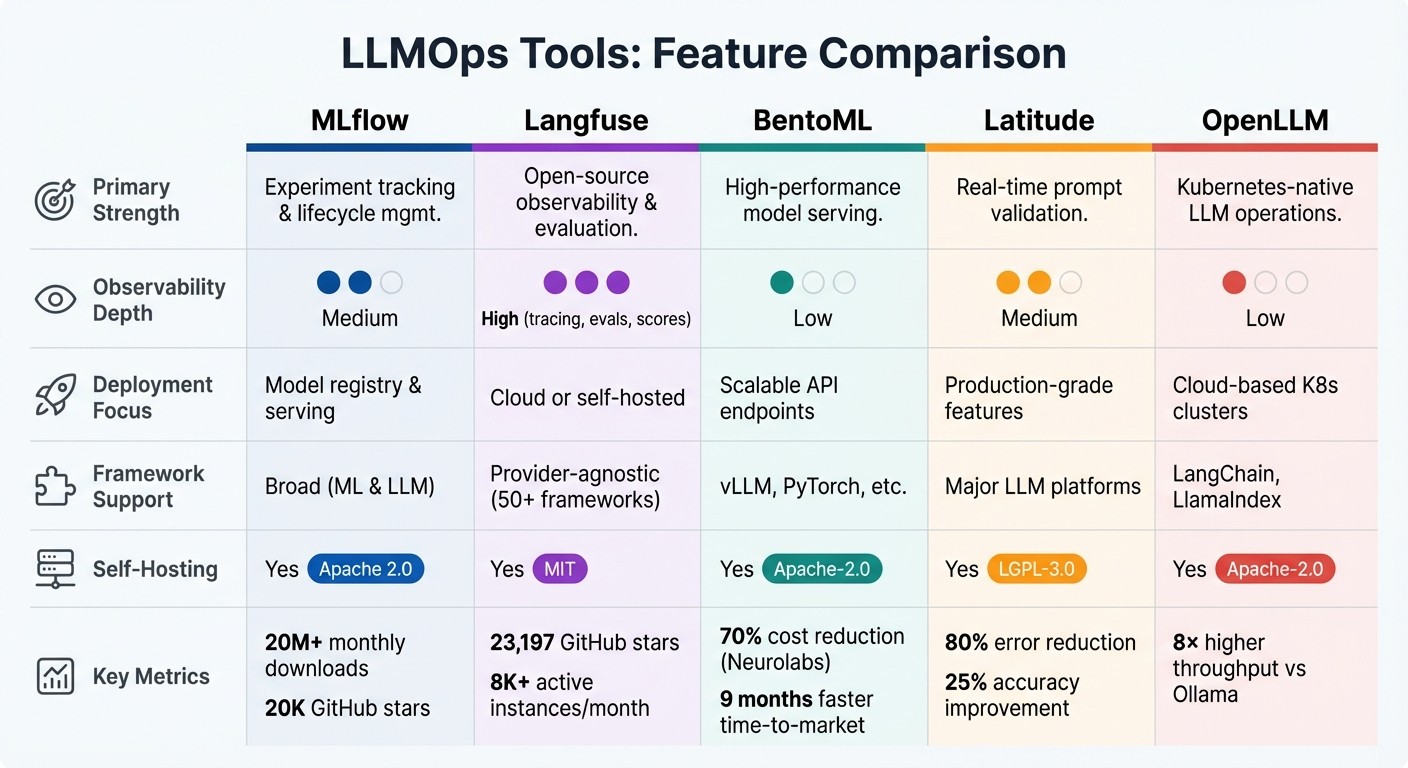

Comparison: Strengths and Weaknesses

LLMOps Tools Comparison: Features, Strengths, and Self-Hosting Options

Here's a breakdown of the key strengths and areas of focus for some leading LLMOps tools, based on the earlier discussion.

MLflow stands out for its capabilities in experiment tracking and lifecycle management, covering both ML and LLM workflows. It integrates seamlessly with popular IDEs, cloud platforms, and data science tools. Being open-source, its core features are free, with enterprise-level options offered through platforms like Databricks.

Langfuse is a go-to solution for open-source observability. It excels in deep tracing and supports over 50 frameworks. As noted by Tracia Blog:

"Langfuse's biggest draw is that it's open source... You can self-host it, audit the code, and keep all data on your infrastructure."

BentoML focuses on high-performance model serving, utilizing engines like vLLM to handle demanding workloads. It operates under the Apache-2.0 license, and costs are tied to the infrastructure it runs on - usually GPUs.

Latitude bridges the gap between technical teams and domain experts with its "Reliability Loop", which combines observability, prompt optimization, and human-in-the-loop feedback. According to user reports, adopting Latitude can reduce critical production errors by 80% and improve model accuracy by 25% within two weeks.

OpenLLM delivers Kubernetes-native operations with APIs compatible with OpenAI, achieving nearly 8× the throughput of localized solutions like Ollama on comparable hardware.

The table below summarizes the core features of each tool, including their focus areas, observability depth, and hosting options:

Tool | Primary Strength | Observability Depth | Deployment Focus | Framework Support | Self-Hosting |

|---|---|---|---|---|---|

MLflow | Experiment tracking & lifecycle mgmt | Medium | Model registry & serving | Broad (ML & LLM) | Yes |

Langfuse | Open-source observability & evaluation | High (tracing, evals, scores) | Cloud or self-hosted | Provider-agnostic (50+ frameworks) | Yes (MIT) |

BentoML | High-performance model serving | Low | Scalable API endpoints | vLLM, PyTorch, etc. | Yes (Apache-2.0) |

Latitude | Real-time prompt validation | Medium | Production-grade features | Major LLM platforms | Yes (LGPL-3.0) |

OpenLLM | Kubernetes-native LLM operations | Low | Cloud-based K8s clusters | LangChain, LlamaIndex | Yes (Apache-2.0) |

Your choice will depend on your specific requirements. For in-depth tracing and evaluation, Langfuse offers flexibility with its MIT-licensed platform. If collaborative prompt engineering and real-time validation are priorities, Latitude is a strong contender. On the other hand, those needing optimized high-throughput serving should explore OpenLLM or BentoML, which use advanced techniques like continuous batching and PagedAttention to make the most of GPU resources.

Conclusion

Creating a reliable LLM workflow is all about using the right tools for the right tasks. Instead of hunting for a single perfect solution, focus on building a modular pipeline tailored to your needs. Start by implementing strong observability practices to track production data and pinpoint failures. As OpenAI emphasizes:

"If you are building with LLMs, creating high quality evals is one of the most impactful things you can do. Without evals, it can be very difficult and time intensive to understand how different model versions might affect your use case."

An effective LLMOps strategy blends observability, prompt management, and high-performance serving to create sturdy AI systems ready for production. The key lies in closing the reliability loop: capturing real-world traffic, identifying failures using human-in-the-loop feedback, and running automated regression tests. Teams adopting this method have seen an 80% drop in critical errors and a 25% improvement in model accuracy in just two weeks. Considering that undetected LLM failures cost businesses nearly $1.9 billion annually, investing in the right mix of tools isn't just smart - it's necessary.

To further strengthen your pipeline, decouple prompts from your codebase and embed automated evaluations into your CI/CD process. This helps uncover subtle response issues before they escalate. Open-source tools provide flexibility, whether you choose to self-host for data security or opt for managed solutions starting at $299/month. By integrating the best tools into a cohesive system, you can transform unreliable AI systems into production-ready features that consistently deliver results.

FAQs

What’s the easiest way to start adding observability to an LLM app?

The simplest way to integrate observability into an LLM app is by leveraging tools such as OpenTelemetry, which provides support for metrics, traces, and logs. To get started, install OpenTelemetry, configure it for metrics collection and tracing, and use visualization tools like Grafana to analyze the data.

Additionally, platforms like Latitude offer built-in solutions that make it easy to monitor request metrics, capture traces, and evaluate model behavior with minimal manual effort. These tools streamline the process, saving time while delivering valuable insights.

How do I choose between monitoring, evaluation, and serving tools for LLMOps?

Choosing the right tools for LLMOps comes down to understanding what your workflow needs.

Monitoring tools keep an eye on system performance, resource usage, and latency, providing real-time insights to maintain smooth operations.

Evaluation tools focus on assessing key aspects like accuracy, bias, and safety, making sure your models are up to standard before they go live.

Serving tools handle model delivery, ensuring scalability and efficiency for seamless deployment.

Using these tools together creates a workflow that's dependable, efficient, and safe.

How can I cut token costs without hurting response quality?

Reducing token costs can be achieved through a mix of smart strategies, including:

Optimizing prompts: Craft concise and efficient prompts to get the desired output without unnecessary token usage.

Implementing caching: Store and reuse responses for repeated queries to avoid redundant processing.

Using fallback chains: Set up a system where simpler or smaller models handle initial requests, and only escalate to larger models when needed.

Choosing the right model: Opt for models that strike a balance between size and quality, such as smaller or fine-tuned models, which can deliver strong results at a lower cost.

These approaches ensure you maintain high-quality responses while keeping expenses in check.