Human-in-the-loop review catches cultural and subtle biases machines miss, enabling continuous, measurable AI fairness improvements.

César Miguelañez

AI systems often reflect biases from their training data, which can lead to unfair decisions or outputs. Automated tools alone can't fully address these issues because they miss subtle nuances like tone or context. This is where Human-in-the-Loop (HITL) feedback becomes critical. Experts review AI outputs, identifying biases that machines overlook, such as cultural misinterpretations or gender stereotypes.

Key Takeaways:

Human Review Matters: Automated metrics like BLEU or ROUGE often fail to detect deeper issues like sarcasm or stereotyping.

Diverse Reviewer Panels: Include individuals from varied backgrounds to catch biases across demographics.

Structured Feedback Loops: Use tools to collect, annotate, and analyze human feedback systematically.

Bias Mitigation Techniques: Combine human insights with methods like data resampling, adversarial testing, and post-processing for better results.

For example, Salus AI improved its accuracy from 80% to nearly 100% in marketing calls by integrating expert feedback, while Stanford Health Care achieved 81% alignment with clinical standards by validating outputs with physicians. Addressing bias isn’t a one-time fix - it requires continuous human oversight and evaluation to ensure equitable AI systems.

How Human Feedback Helps Reduce Bias in AI

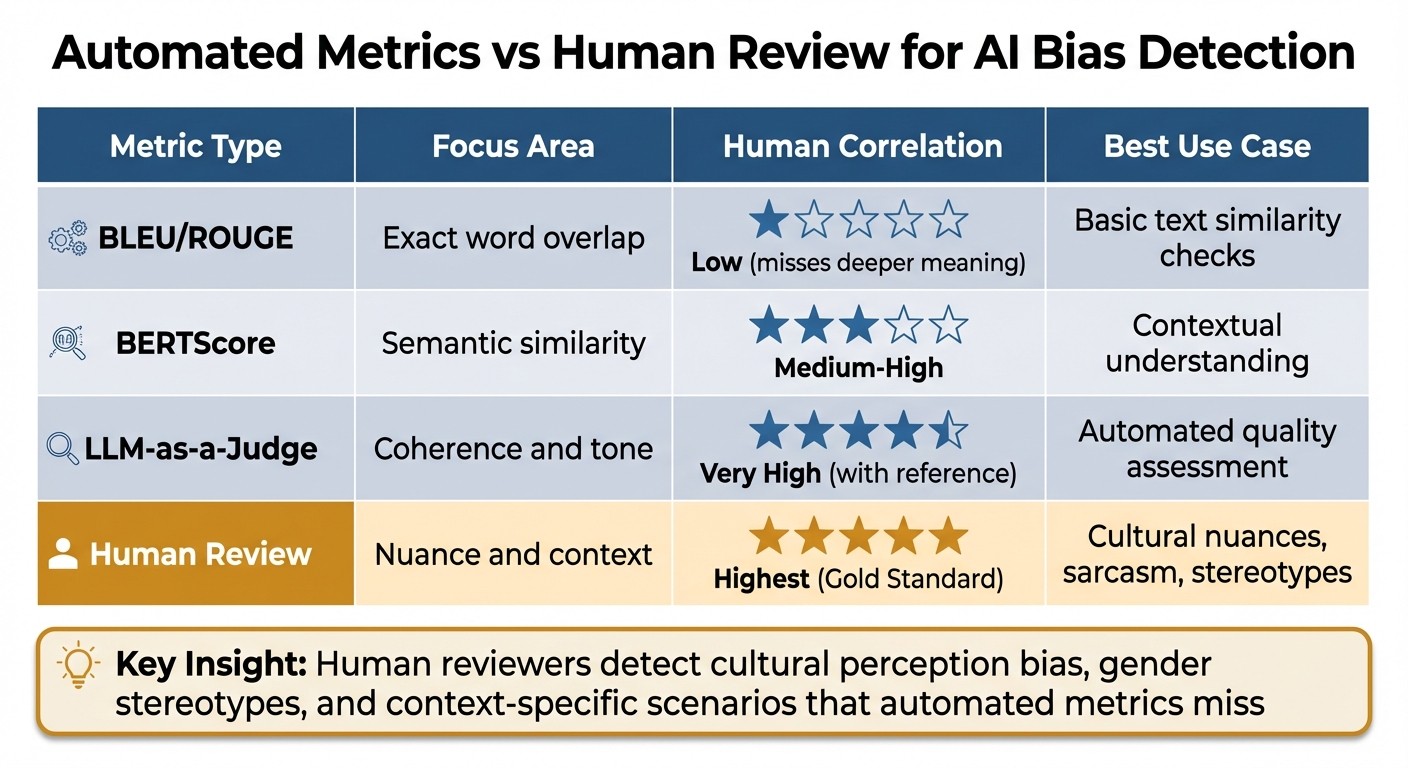

Comparison of AI Bias Detection Methods: Automated Metrics vs Human Review

What is Human-in-the-Loop Feedback?

Human-in-the-Loop (HITL) feedback takes bias detection to the next level by involving human reviewers to evaluate subtleties that automated systems often miss. While algorithms rely on statistical patterns to assess AI models, human reviewers bring in the ability to interpret context and nuance. This is especially crucial for multimodal AI systems that handle text, images, audio, and video - domains where understanding cultural nuances and context is essential.

One major benefit of HITL feedback is its ability to assess context-specific scenarios that automated metrics can't interpret. For example, human reviewers can detect "opposite valence" in art emotion classification. This refers to situations where one culture might see a piece of art as expressing positive emotion, while another interprets it negatively. Similarly, they can notice when a model performs well with Western imagery and concepts (like North American household objects) but struggles with Eastern ones (such as Chinese cultural elements).

These insights are critical because automated metrics, no matter how advanced, often fail to capture the full range of bias present in AI systems.

Why Automated Metrics Miss Important Biases

Automated systems typically evaluate AI models based on statistical patterns and word overlap using tools like BLEU or ROUGE. However, these methods often fall short when it comes to understanding deeper meaning, tone, or subtle cultural cues. Even more sophisticated measures, such as BERTScore - which looks at semantic similarity - can miss nuances like sarcasm, ambiguous social contexts, or culturally specific interpretations that human evaluators can easily pick up.

Cultural perception bias is a clear example of where automated metrics fall short. Studies show that Western audiences tend to focus on the central figures in an image, while Eastern audiences pay more attention to the overall scene context. Models trained predominantly on Western data may fail to account for these differences, leading to outputs that unintentionally favor one cultural perspective over another. Similarly, automated systems can misinterpret an assertive tone as factual accuracy, even when the reasoning is biased.

Gender bias is another area where automated systems struggle. For instance, they often reinforce stereotypes by associating certain professions with gender-specific pronouns. Identifying and addressing these biases requires human reviewers who can evaluate language in context and flag harmful stereotypes, helping to ensure AI outputs are more equitable.

Human reviewers also excel in adapting to new or unexpected data, such as evolving cultural slang or emerging social trends. Automated systems, on the other hand, may require retraining to handle such changes. This flexibility makes human feedback essential for creating AI systems that are fair and inclusive for diverse populations.

The table below highlights the strengths of human review compared to automated metrics:

Metric Type | Focus Area | Human Correlation |

|---|---|---|

BLEU/ROUGE | Exact word overlap | Low (misses deeper meaning) |

BERTScore | Semantic similarity | Medium-High |

LLM-as-a-Judge | Coherence and tone | Very High (with reference) |

Human Review | Nuance and context | Highest (Gold Standard) |

How to Collect and Structure Human Feedback for Bias Detection

Building a Diverse Reviewer Panel

The first step in effective bias detection is assembling a reviewer panel that mirrors the diversity of your AI system's users. This diversity should go beyond basic factors like race and gender. Include individuals from different socioeconomic backgrounds, geographic regions, and language communities. A broad range of perspectives helps uncover biases that might otherwise go unnoticed.

Reviewers with varied experiences and contexts can interpret language, culture, and professional nuances differently, which is essential for identifying subtle biases.

"Human evaluation metrics are essential for capturing nuanced biases that quantitative metrics may miss." - Conor Bronsdon, Head of Developer Awareness, Galileo

Setting Clear Bias Criteria

To ensure consistent evaluations, reviewers need well-defined guidelines. Create specific bias criteria to help them assess AI outputs systematically. For example, you could use three categories:

Aligned: The response fully meets expectations without any bias.

Partially Aligned: The response contains minor issues or subtle stereotypical elements.

Not Aligned: The response shows clear bias or harmful content.

These categories should cover both overt and subtle biases. For complex cases, decision trees can guide reviewers through tricky scenarios, such as outputs that unintentionally reinforce stereotypes. This ensures consistent and actionable feedback across all reviewers and evaluation cycles.

Tools for Annotating Multimodal Outputs

When dealing with text, images, audio, and video, having the right annotation tools is crucial. Platforms like Latitude offer a Human-in-the-Loop framework that simplifies the process. Reviewers can examine logs, assign binary pass/fail ratings, provide numerical scores, and leave detailed natural language feedback through both the user interface and SDK.

Structured feedback systems like these allow for scalable insights through quantitative ratings, while iterative reviews help refine specific bias patterns. Combining these approaches ensures that human feedback is effectively integrated into the ongoing improvement of AI models.

Using Latitude to Integrate Human Feedback into AI Workflows

Monitoring Model Behavior and Collecting Feedback

Latitude offers a structured way to monitor AI models with two evaluation modes: Live Mode for real-time tracking and Batch Mode for regression testing using predefined datasets. It organizes outputs by type - such as text, images, or tool calls - making it easier to manage complex systems.

To address bias-related issues, the platform uses criteria like "toxicity" and "bias" as negative flags. This encourages the system to aim for lower scores in these areas. Feedback collection can be automated with Latitude's SDK, which allows teams to upload logs and annotate outputs in real time. Activating "Live Evaluation" for safety metrics ensures problematic outputs are flagged immediately.

This systematic approach lays the groundwork for an in-depth analysis of bias in real-world scenarios.

Finding Bias Patterns Through Data Slicing

Latitude builds on real-time feedback by slicing collected data to identify bias patterns. Production logs are transformed into structured datasets, helping uncover issues across different demographic groups and contexts. Negative evaluations for undesirable traits guide the system to optimize performance in specific areas, making it easier to pinpoint where the model struggles. With live monitoring, teams can quickly detect performance drops or emerging biases as new logs are added.

Improving Models Through Repeated Evaluation

Latitude employs a six-stage Reliability Loop - Design, Test, Deploy, Trace, Evaluate, and Improve - to continuously reduce bias. Teams work with "golden datasets" that include diverse inputs and edge cases, updating these datasets with examples from production logs to keep up with evolving user behavior. The platform supports both batch evaluations for regression testing and live evaluations for immediate feedback. This combination ensures that new updates don't reintroduce old biases while also catching new issues as they appear in production.

Combining Human Feedback with Automated Methods

Pairing human feedback with automated techniques creates a powerful combination, rather than relying solely on one approach. This blend allows human judgment to uncover nuanced problems while automation ensures consistency and scalability. Teams from various disciplines use these combined insights throughout the AI lifecycle - from selecting training data to fine-tuning final outputs.

It's essential to determine when human input or automation is more effective. Automated tools are great for handling large datasets and applying consistent rules, but they often miss subtle biases that require domain expertise or cultural understanding. Human reviewers, on the other hand, excel at catching these subtleties and guiding automated systems toward better outcomes.

Using Human Feedback to Guide Data Resampling

Human annotators play a critical role in identifying biased patterns within model outputs, which can then guide data resampling strategies. For instance, if reviewers flag underrepresented demographic groups, teams can adjust the dataset by oversampling minority groups or undersampling overrepresented ones to achieve balance . In healthcare AI, diverse annotators have pointed out gaps in representing certain patient demographics. This feedback has led to stratified sampling methods that ensure proportional representation across factors like age and gender.

Counterfactual augmentation is another tool that reduces gender bias by 15–20% in fairness metrics. Human reviewers identify causal biases, prompting the creation of counterfactual examples. For example, they might adjust a sensitive attribute like gender in a text prompt without altering its overall context . Additionally, MIT's TRAK technique automates the process of identifying harmful training data points by analyzing model predictions on minority subgroups. Human feedback prioritizes problematic predictions, helping refine the approach.

Using Adversarial Testing to Reduce Bias

Adversarial testing builds on data resampling by challenging AI outputs with intentionally designed inputs that expose biases. For instance, reviewers might use prompts embedding stereotypes to test a language model. These evaluations help prioritize which biases need immediate attention . Diverse panels apply clear criteria to rank and validate biases. A practical example includes testing a multimodal AI system with adversarial images of professionals, varying by gender and ethnicity. Human reviewers have flagged issues like lower confidence scores for women in tech roles, prompting targeted retraining of the model.

Adversarial networks further reduce bias by training models to avoid predicting sensitive attributes while maintaining performance. Human feedback refines these adversaries through iterative evaluations. Studies show that combining this method with prejudice remover regularizers can cut discrimination in hiring AI systems by 25%.

After adversarial testing, post-processing ensures even finer adjustments to model fairness.

Applying Post-Processing for Fairer Outputs

Post-processing complements resampling and adversarial testing by fine-tuning fairness in model outputs. This involves adjusting results after they are generated, with human feedback validating fairness constraints. For example, human evaluators review prediction distributions across demographic groups to guide threshold adjustments or histogram binning, ensuring equitable treatment. In recommender systems, annotators may identify imbalances - such as gender-skewed job search results. This feedback leads to post-processing filters that reorder results to improve proportionality while keeping relevance intact. As a result, visibility for underrepresented groups can increase by 30%.

Metrics like demographic parity and equalized odds track effectiveness, with human validation showing an 18% reduction in bias without sacrificing accuracy . In areas like hate speech detection, post-processing with human-audited screeners ensures fairness and scalability after automated predictions.

Measuring How Human Feedback Reduces Bias

To understand the impact of human feedback on reducing bias, it’s essential to measure its effects using specific metrics. This involves analyzing performance across different demographic groups and validating improvements through ongoing evaluations.

Tracking Performance Across Demographic Groups

Performance slice analysis is a key method for assessing accuracy and bias across various demographic segments. It helps determine whether your model treats all groups equitably or shows favoritism toward certain populations.

For instance, using causal interventions can help break connections between biased inputs and outputs. A noteworthy example is the MOMA (Multi-Objective approach within a Multi-Agent framework), which achieved a reduction in bias scores by up to 87.7% on the BBQ dataset while maintaining accuracy. Similarly, test execution feedback significantly lowered GPT-4's code bias ratio from 59.88% to 4.79%. Metrics like these, tracked across dimensions such as age, gender identity, race/ethnicity, disability status, and socioeconomic status, provide critical benchmarks. Datasets like BBQ and StereoSet serve as valuable references for measuring these improvements.

These insights directly feed into refining AI workflows, reinforcing the importance of a human-in-the-loop approach. Regular tracking of these metrics ensures that bias mitigation strategies continue to evolve and improve.

Validating Improvements Through Multiple Evaluation Rounds

Single evaluations aren’t enough to ensure lasting results. Bias can reappear when models encounter fresh data or unusual scenarios, making repeated evaluations a necessity. Ongoing assessments create a feedback loop, turning production issues into actionable refinements through continuous monitoring in Live Mode and batch testing.

By enabling "Live Evaluation" for safety and bias metrics, you can observe production traffic in real time. This allows for early detection of performance regressions, minimizing their impact on users. While batch testing with "Golden Datasets" is effective for regression checks, live monitoring evaluates new logs as they are generated.

Regular evaluations should be conducted after each model update, once sufficient new human feedback has been collected, or on a set schedule, such as monthly. Each evaluation should compare current performance to previous baselines across all demographic groups, ensuring that addressing one bias doesn’t unintentionally create another. This iterative process is key to sustaining meaningful improvements over time.

Conclusion and Key Takeaways

Reducing bias in AI systems requires human judgment because automated metrics often miss subtle details. Human-in-the-loop feedback is especially crucial for catching biases in multimodal outputs where context and cultural subtleties play a significant role. To address this, it's essential to assemble a diverse panel of reviewers, establish clear criteria for identifying bias, and implement structured processes to transform feedback into actionable improvements.

Combining human expertise with automation helps uncover bias patterns and enables scalable solutions like data resampling, adversarial testing, and post-processing. It's equally important to monitor performance across different demographic groups - such as age, gender identity, race, and disability status - to ensure that bias corrections don't inadvertently introduce new issues.

The most effective teams approach bias reduction as an ongoing effort, not a one-time fix. Regular evaluations and live monitoring are key to catching regressions and validating improvements, especially as models encounter new edge cases. This continuous feedback loop lays the groundwork for structured workflows that adapt over time.

Latitude provides a robust framework to implement these workflows efficiently. Its Reliability Loop - which includes Design, Test, Deploy, Trace, Evaluate, and Improve - transforms production failures into meaningful refinements. The platform blends human feedback with automated evaluations and programmatic rules, offering multiple perspectives on fairness. Tools like Negative Evaluations allow teams to specifically target and reduce bias, while the AI Gateway ensures every model interaction is tracked, creating a transparent audit trail.

FAQs

When do I need human reviewers instead of automated metrics?

Human reviewers play a key role in tackling complex challenges such as bias, hallucinations, or unclear outputs. While automated metrics are great for quick, surface-level assessments, they often fail to catch finer details like tone, logical flow, or sensitivity to different perspectives. Human feedback is essential for improving prompts, spotting biases, and promoting fairness - providing insights that automated systems struggle to deliver, particularly in nuanced or subjective situations.

How do I choose a diverse HITL reviewer panel for my users?

When building a Human-in-the-Loop (HITL) reviewer panel, it's crucial to ensure diversity to capture a wide range of perspectives. Start by systematically monitoring outputs for any signs of bias or variability. This step helps identify areas where diverse viewpoints can make a difference.

To achieve this, include reviewers from a mix of demographic and social backgrounds. Aim for diversity in age, gender, ethnicity, and cultural experiences. A panel with varied perspectives is better equipped to spot and address issues that a more uniform group might overlook.

Additionally, using structured workflows to gather and analyze human feedback is key. These workflows make it easier to identify representative reviewers while also improving bias detection. By doing this, you can highlight and address potential blind spots that might otherwise go unnoticed.

How can I keep bias from coming back after model updates?

To keep bias from creeping back in after updates, it's essential to use ongoing feedback and evaluation methods. Keep an eye on outputs, identify any biases, and adjust training data or tweak model parameters when necessary. Make it a routine to validate prompts and assess bias using tools like fairness metrics or expert reviews. Platforms such as Latitude allow teams to collaborate with domain experts, helping to tackle biases dynamically during updates and maintain fairness and accuracy over time.