Human reviewers refine prompts to boost clarity, accuracy, and engagement using iterative feedback, evaluation metrics, and collaborative workflows.

César Miguelañez

Large language models can generate impressive results, but consistent quality often requires human feedback. Automated systems adjust parameters quickly, but they miss nuances like tone, relevance, and logic. Human reviewers provide critical insights that algorithms can't measure, improving prompt clarity, accuracy, and user engagement. Research shows that integrating human feedback boosts performance - examples include raising AI accuracy from 80% to 95-100% in compliance tasks and increasing user engagement metrics like click-through rates and retention.

Key takeaways:

Clarity: Human reviewers refine vague or overly complex prompts for better outputs.

Accuracy: Iterative feedback loops identify and fix errors, improving precision.

Engagement: Tailored prompts aligned with user needs lead to better outcomes.

Techniques: methods like role assignments and few-shot examples, and structured evaluation metrics make feedback-driven refinement systematic and effective.

Platforms like Latitude streamline these workflows, combining observability, collaborative feedback, and automated testing to refine prompts efficiently. The future of prompt optimization lies in balancing automation with expert input to maintain high-quality results. Exploring viral LLM tools can provide further inspiration for implementing these optimization workflows.

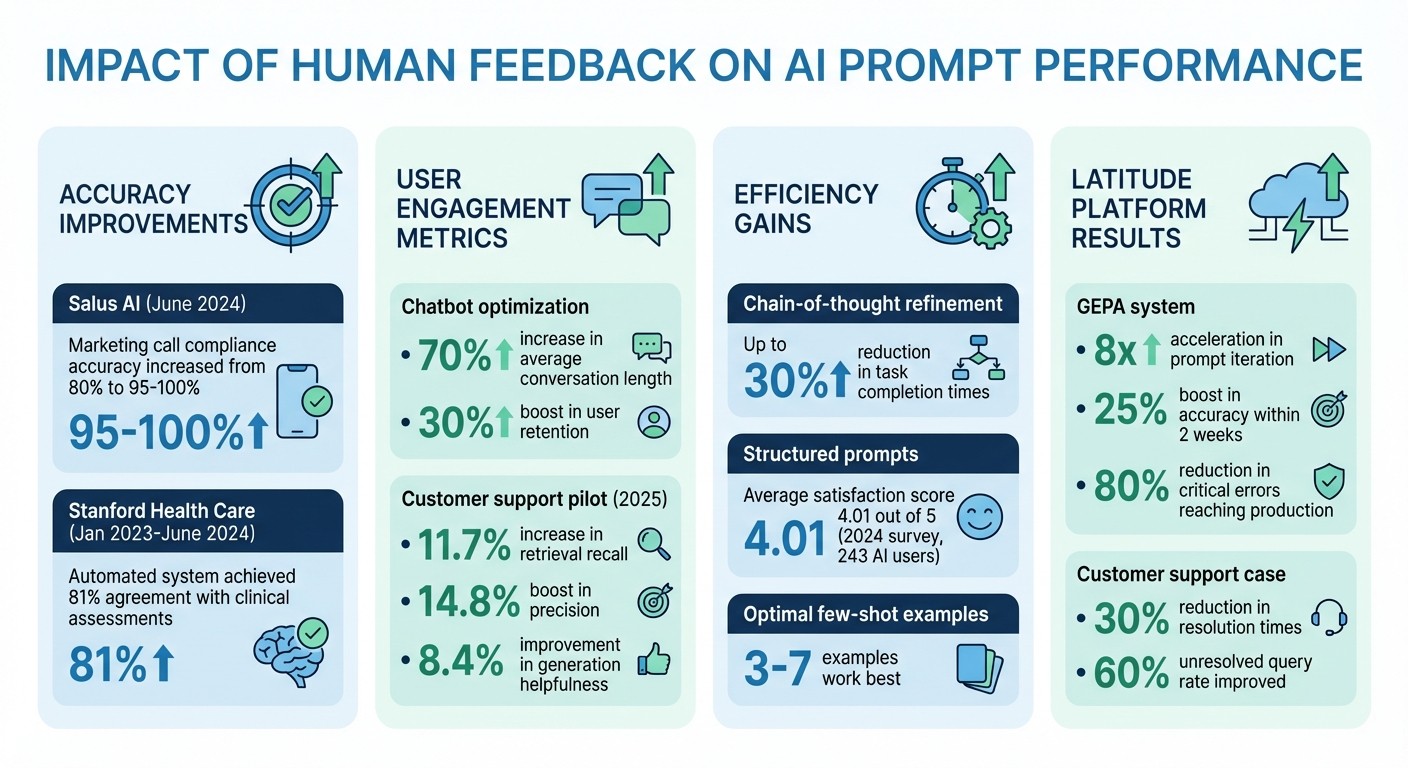

Impact of Human Feedback on AI Prompt Performance: Key Statistics and Results

How Human Feedback Improves Prompt Quality

Human feedback plays a crucial role in shaping prompt design by enhancing clarity, improving accuracy, and boosting user engagement. These improvements stem from insights that automated systems alone can't fully achieve.

Refining Prompt Clarity and Specificity

Clear prompts lead to better outputs. Human reviewers excel at identifying vague instructions and suggesting specific adjustments to align results with intended goals. A powerful method is role assignment, where the model is directed to "Act as a financial analyst" or "Respond as a compliance officer." This technique helps focus the model's responses, tailoring tone and depth to fit particular contexts.

"Prompt engineering is about conditioning them for desired outputs." - Vince Lam

Striking the right balance in prompts is another area where human feedback excels. If prompts are too vague, the model might produce irrelevant results; if they're overloaded with jargon, the output can become confusing. Experts guide this balance by specifying structures like "Use bullet points" or simplifying overly complex language. This ensures prompts are both precise and easy to follow.

Improving LLM Accuracy Through Iterative Feedback

Accuracy improves through a process of trial, analysis, and refinement. Experts review outputs, identify where they fall short, and tweak prompts to address these gaps. This structured feedback loop turns prompts into highly effective tools.

For example, in June 2024, Salus AI applied this method to enhance marketing call compliance for premium health screenings. By refining prompts and incorporating retrieval-augmented generation based on expert feedback, they increased accuracy from 80% to an impressive range of 95% to 100%. Similarly, a chatbot team used repeated feedback to optimize their reward model, achieving a 70% increase in average conversation length and a 30% boost in user retention.

"Effective LLM prompting is an iterative process. It's rare to get the perfect output on the first try." - Peter Hwang, Machine Learning Engineer, Yabble

As these refinements take hold, the result isn't just better accuracy - it’s also a noticeable improvement in user satisfaction and task success.

Increasing User Engagement and Task Success Rates

Refined prompts don’t just improve technical performance; they also deliver tangible business results. When prompts are better aligned with user needs, engagement naturally increases. Between January 2023 and June 2024, researchers at Stanford Health Care, led by Dr. François Grolleau, validated the MedFactEval framework using expert feedback from seven physicians. This process established a reliable ground truth for discharge summaries, leading to an automated system that achieved 81% agreement with clinical assessments.

In a 2025 U.S.-based customer support pilot, a collaborative "Agent-in-the-loop" framework allowed support agents to provide real-time feedback on knowledge relevance. This approach led to an 11.7% increase in retrieval recall, a 14.8% boost in precision, and an 8.4% improvement in generation helpfulness. These improvements translated directly into faster issue resolution and higher customer satisfaction.

Techniques for Feedback-Driven Prompt Optimization

Improving prompts through human feedback involves practical, step-by-step methods. The best strategies combine structured testing with measurable outcomes, ensuring prompts are fine-tuned to consistently deliver better results.

By leveraging the advantages of human feedback to enhance prompt clarity and precision, the following methods outline how systematic approaches can refine prompts through ongoing, iterative improvements.

Iterative Feedback Loops and Chain-of-Thought Refinement

Iterative feedback loops involve testing prompts, collecting human evaluations, and refining them based on those insights. This cycle of adjustments transforms basic prompts into highly effective tools. For example, a 2024 survey of 243 AI users found that those employing structured prompts reported an average satisfaction score of 4.01 out of 5, highlighting the direct impact of iterative refinement.

Chain-of-thought (CoT) refinement builds on this by guiding models to outline their reasoning step-by-step. Instead of a simple request like "Write a Python function for sorting", a CoT prompt might say: "Step 1: Identify input type. Step 2: Choose algorithm. Step 3: Implement and test." Human reviewers then evaluate the logic and completeness of each step, providing feedback to improve the next iteration. This method has been shown to cut task completion times - such as coding or report writing - by up to 30%.

Few-Shot Examples and Role Assignment

Few-shot examples, when paired with role assignment (e.g., "You are a helpful support agent"), help guide models by providing a mix of positive, negative, and edge-case scenarios. Selecting a diverse set of examples is key, and human feedback can rank their effectiveness. Research indicates that 3-7 examples typically work best for most tasks, while exceeding 10 examples often leads to diminishing returns and unnecessary token usage.

This approach has proven effective in practical settings. For instance, customer support chatbots improved significantly when teams combined role assignments with few-shot examples of empathetic responses. Human reviewers then scored these interactions, following a process similar to the PLHF (Prompt Learning from Human Feedback) framework. This iterative refinement led to measurable gains in response quality on industrial datasets.

Using Evaluation Metrics for Feedback Integration

Structured metrics help translate subjective feedback into actionable improvements. Commonly used metrics include accuracy scores, relevance ratings (e.g., 1-5 scales), coherence checks, and task success rates. These metrics pinpoint areas where prompts need fine-tuning, making the refinement process more systematic.

The PLHF framework exemplifies this approach. In 2024 experiments using public and industrial datasets, human experts scored input-output pairs. An evaluator model was trained to replicate these preferences, achieving greater accuracy than traditional SVM baselines. This evaluator then autonomously graded and refined prompts, significantly improving quality while reducing the need for ongoing human oversight. The framework operates with linear human feedback relative to training samples, making it practical for environments where continuous human review is limited.

Evaluation Type | Best For | Scalability |

|---|---|---|

LLM-as-Judge | Tone, creativity, helpfulness | Moderate |

Programmatic Rules | JSON schema, regex, length checks | High |

Human-in-the-Loop | Creating golden datasets, high-stakes review | Low |

These techniques provide a strong foundation for monitoring and improving LLM behavior in real-world applications, ensuring prompts remain effective and adaptable over time.

Using Latitude to Streamline Feedback Workflows

Latitude takes the concept of human feedback and transforms it into a structured, production-ready system for refining prompts. By combining observability, collaboration, and iterative improvement, this open-source platform simplifies the process of enhancing AI-driven workflows.

Observing and Debugging LLM Behavior

Latitude’s AI Gateway acts as a middleman between your application and AI providers, automatically logging all inputs, outputs, and metadata without requiring extra setup. The platform breaks down operations into traces (the entire workflow) and spans (specific actions like database queries or tool calls), helping teams pinpoint issues. For instance, when a customer support chatbot struggled with a 60% unresolved query rate, Latitude’s logs revealed that overly generic prompts were the culprit. Adjusting these prompts cut resolution times by 30%.

The Interactive Playground allows engineers to simulate production scenarios with real inputs, making it easier to diagnose problems like hallucinations or misalignment. Meanwhile, Live Mode keeps an eye on production logs in real time, catching performance drifts or safety concerns as they arise. Research confirms that iterative feedback can significantly improve prompt performance.

Collaborative Feedback Collection and Evaluation

Latitude enables shared workspaces with role-based permissions, allowing product managers, engineers, and domain experts to collaborate on prompt improvements. Teams can annotate outputs with thumbs up/down ratings, Likert scales, or detailed comments directly within the platform. This setup mirrors the "human-in-the-loop" approach, which has been shown to be effective for refining prompts.

The platform also integrates various evaluation methods - LLM-as-Judge, Programmatic Rules, and Human-in-the-Loop - into a single workflow. By automating metric calculations alongside human feedback, Latitude generates comprehensive reports that translate insights into actionable changes. In one example, an e-commerce team evaluated 50 prompt variations collaboratively, identifying top performers that enhanced user engagement by focusing on specificity. This approach aligns with results seen in PLHF frameworks applied to industrial datasets.

Continuous Improvement with Iterative Workflows

Latitude’s Guided Exploratory Prompt Adjustment (GEPA) system takes iterative workflows to the next level by automatically testing prompt variations against real-world evaluations. Teams using GEPA have reported an 8x acceleration in prompt iteration and a 25% boost in accuracy within just two weeks. The platform also supports A/B testing, version control for prompts, and deployment gates tied to metric thresholds, creating a smooth cycle of observe → feedback → refine → deploy.

Live Evaluations continuously scan production logs, while version histories with diff comparisons allow teams to track changes and quickly roll back if needed. This structured workflow has led to an 80% reduction in critical errors reaching production. With integrations for over 2,800 tools, including Slack and PagerDuty, Latitude ensures that feedback workflows can scale effectively, even for teams managing thousands of interactions daily.

Conclusion

Key Takeaways

Human feedback transforms prompt engineering from a trial-and-error approach into a structured, repeatable process. By combining measurable data with expert evaluations, teams can refine prompts in a way that aligns AI outputs with practical needs. This systematic approach enhances prompt clarity, improves the accuracy of language models, and boosts user engagement. The results? Tangible improvements like fewer errors and quicker issue resolutions.

The Future of Feedback-Driven Prompt Engineering

Looking ahead, the focus shifts to increasing automation without losing the essential role of human oversight. Feedback-driven methods are evolving toward automated evaluation systems that work hand-in-hand with human expertise. Platforms like Latitude are leading this transformation by offering tools for observability, collaborative feedback collection, and continuous optimization in a single streamlined process. As AI systems grow in scale, the challenge isn't just collecting feedback - it's about processing it effectively while maintaining high standards. Tools like semantic versioning (MAJOR.MINOR.PATCH) provide a practical way to track and manage prompt updates. The future belongs to teams that find the right balance between automation and human judgment, creating flexible feedback systems that adapt as their AI products develop.

FAQs

When is human feedback worth the cost?

Human feedback plays a critical role in enhancing the accuracy, safety, and relevance of large language models (LLMs), particularly for complex or sensitive tasks. It helps tackle challenges such as bias, inaccuracies, and system failures, especially during the refinement and validation stages. In high-stakes fields like healthcare or safety-critical systems, the advantages - improved performance, increased safety, and greater trust - often justify the investment, making it an essential part of the process.

What should reviewers score in prompt evaluations?

Reviewers should assess prompts by focusing on five key aspects: clarity, relevance, coherence, accuracy, and task alignment. This approach guarantees meaningful feedback and helps refine prompts to perform better over time.

How do you scale feedback without slowing releases?

You can manage feedback effectively without slowing down releases by relying on iterative and automated methods like A/B testing. This approach enables real-time evaluations and gradual adjustments. Techniques like automated prompt optimization and engineering turn prompt refinement into a measurable, streamlined process, cutting down on manual labor. By blending these tools with human oversight, such as consistent monitoring, you can ensure feedback is implemented while keeping deployment cycles fast and efficient.