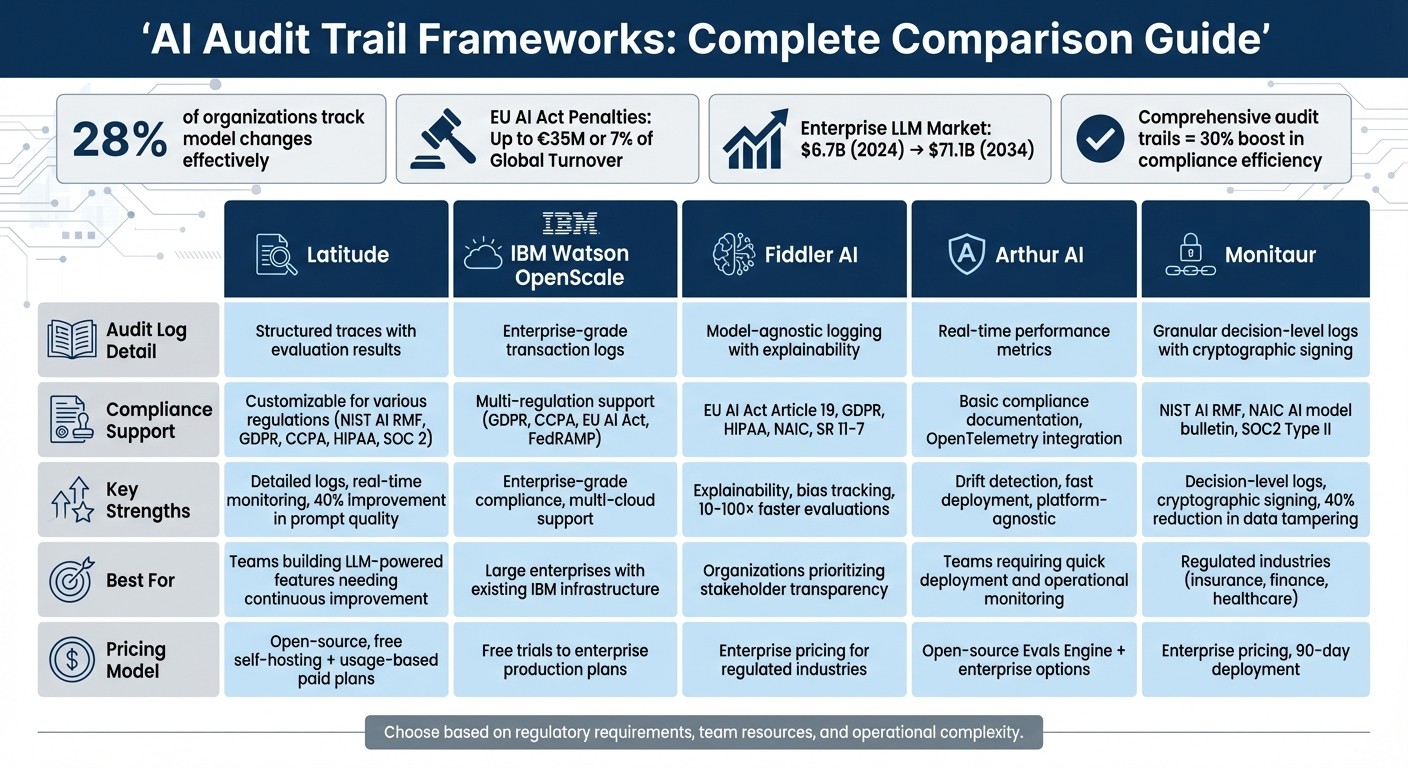

Compare five leading AI audit trail frameworks—logging, monitoring, and compliance features—to choose the right solution for your team's regulatory and operational needs.

César Miguelañez

AI audit trails are now a must-have for legal compliance and operational transparency. From the EU AI Act to GDPR, regulations demand detailed, tamper-proof records of AI system actions. Yet, only 28% of organizations track model changes and decisions effectively, leaving many unprepared for compliance audits.

This guide compares five leading frameworks for AI audit trails: Latitude, IBM Watson OpenScale, Fiddler AI, Arthur AI, and Monitaur. Each offers unique tools for logging, monitoring, and regulatory compliance. Here's a quick breakdown:

Latitude: Open-source, flexible, ideal for small teams needing detailed logs and continuous monitoring.

IBM Watson OpenScale: Enterprise-focused, great for multi-cloud setups and regulated industries.

Fiddler AI: Tailored for explainability and bias tracking, with strong compliance tools.

Arthur AI: Focuses on drift detection and performance monitoring, suitable for diverse AI systems.

Monitaur: Compliance-first, best for industries like finance and healthcare needing strict governance.

Quick Comparison

| Framework | Key Strengths | Best For |
|---|---|---|
| Latitude | Detailed logs, real-time monitoring | Teams building LLM-powered features |
| IBM Watson OpenScale | Enterprise-grade compliance tools | Large enterprises with strict regulations |
| Fiddler AI | Explainability and bias tracking | Transparency-focused organizations |
| Arthur AI | Drift detection, fast deployment | Teams needing quick operational insights |
| Monitaur | Decision-level logs, cryptographic signing | Regulated industries like finance |

Each framework caters to specific needs, from small teams to large enterprises. The right choice depends on your compliance requirements, team resources, and operational complexity. With penalties for non-compliance reaching millions, investing in the right audit trail framework is critical.

AI Audit Trail Frameworks Comparison: Features and Best Use Cases

1. Latitude

Audit Log Granularity

Latitude keeps a detailed record of every AI operation, capturing inputs, outputs, and metadata like model version, temperature settings, and top_p parameters. It also logs system events such as version updates, publishing, and rollbacks. Workflows are broken down into traces (complete operations from start to finish) and spans (individual steps, like database queries or tool calls). This level of detail ensures precise tracking across multi-step processes, offering a tamper-proof record that's especially useful for regulatory audits.
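To make the trace and span structure concrete, here is a minimal sketch in Python. It is not Latitude's SDK or schema: the `Trace` and `Span` classes, their field names, and the example operation are all assumptions chosen for illustration.

```python
from __future__ import annotations

import json
import time
import uuid
from dataclasses import asdict, dataclass, field


@dataclass
class Span:
    """One step inside a trace, such as a database query or a model call."""
    name: str
    input_text: str
    output_text: str
    metadata: dict = field(default_factory=dict)
    span_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    started_at: float = field(default_factory=time.time)


@dataclass
class Trace:
    """A complete operation from start to finish, stored as ordered spans."""
    operation: str
    spans: list[Span] = field(default_factory=list)
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)

    def add_span(self, name: str, input_text: str, output_text: str, **metadata) -> Span:
        # Keyword arguments become span metadata (model version, sampling settings, ...).
        span = Span(name=name, input_text=input_text, output_text=output_text, metadata=metadata)
        self.spans.append(span)
        return span

    def to_json(self) -> str:
        # asdict() recurses into the nested Span dataclasses.
        return json.dumps(asdict(self), sort_keys=True)


# Hypothetical two-step workflow: a lookup followed by a model call.
trace = Trace(operation="answer_support_ticket")
trace.add_span("db_query", "customer_id=42", "3 rows")
trace.add_span(
    "llm_call",
    "Summarize the customer's open tickets.",
    "The customer has three open tickets.",
    model_version="v3",
    temperature=0.2,
    top_p=0.9,
)
record = json.loads(trace.to_json())
```

Serializing each trace as one JSON document keeps every step of a multi-step workflow together, so an auditor can see which inputs and parameters produced a given output.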

Compliance Integrations

Latitude is designed to align with major regulatory standards. It adheres to the NIST AI Risk Management Framework (AI RMF) and follows the ethical principles of transparency and human oversight outlined in the White House AI Bill of Rights. It also supports compliance with regulations like HIPAA, GDPR, CCPA, and SOC 2.

Monitoring Capabilities

Latitude provides continuous monitoring for both technical performance and quality metrics. On the technical side, it tracks key indicators like response latency (p50, p95, and p99 percentiles), throughput (requests per minute or hour), token usage, and cost-per-request. For quality assurance, its Live Evaluations feature reviews production logs to assess safety, helpfulness, and bias as new data comes in. The platform also identifies performance drift by comparing real-time outputs to historical baselines or using automated evaluators to catch regressions early.

Real-time alerts can be set up to flag issues like latency spikes, token usage anomalies, API failures, or quality score drops. When problems arise, Latitude can automatically block unsafe responses or switch to backup models, ensuring smooth operations. This robust monitoring system supports a wide range of applications.
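The latency percentiles and threshold alerts described above can be sketched in a few lines of Python. This is an illustration only, not Latitude's implementation: the nearest-rank percentile method, the function names, and the 500 ms limit are assumptions.

```python
import math


def percentile(values, p):
    """Nearest-rank percentile: the smallest value with at least p% of samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


def latency_report(latencies_ms, p95_limit_ms):
    """Summarize p50/p95/p99 latency and flag an alert when p95 exceeds the limit."""
    report = {f"p{p}": percentile(latencies_ms, p) for p in (50, 95, 99)}
    report["alert"] = report["p95"] > p95_limit_ms
    return report


# Ten hypothetical request latencies with one slow outlier.
latencies = [120, 130, 125, 140, 135, 128, 132, 138, 126, 900]
report = latency_report(latencies, p95_limit_ms=500)
print(report)  # {'p50': 130, 'p95': 900, 'p99': 900, 'alert': True}
```

The median stays at 130 ms while the tail percentiles jump to 900 ms, which is why alerts are usually tied to p95 or p99 rather than to the average.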

Suitability for Use Cases

Latitude is ideal for teams that value flexibility and operational transparency. As an open-source platform, it offers free self-hosting alongside paid plans that scale with usage, integrations, and agent volume. Organizations using Latitude have reported a 40% improvement in prompt quality and gains of up to 18.5% on logical deduction tasks. Its shared workspaces let product managers and engineers experiment together and analyze cost and performance metrics side by side. This makes Latitude especially useful for teams building LLM-powered features in environments where continuous iteration, human feedback, and regulatory compliance are essential.

2. IBM Watson OpenScale

Audit Log Granularity

IBM Watson OpenScale stands out for its detailed compliance and monitoring features. Unlike many other audit trail tools, it tracks transaction-level data for every model decision. This includes inputs, outputs, timestamps, feedback, predictions, and confidence scores. You can access this data through the platform's Transactions page or its Python API [13, 17]. Additionally, it provides explainability insights using methods like LIME, SHAP, and contrastive explanations. The platform's Inspect feature allows users to conduct what-if analysis, offering a deeper understanding of model behavior [14, 15, 17]. Such transparency is particularly valuable during regulatory reviews.

Compliance Integrations

Watson OpenScale is designed to meet stringent regulatory standards. It is FedRAMP-authorized on AWS GovCloud and supports an EU AI Risk Assessment to identify and address compliance risks. It also integrates regulatory requirements from the US, UK, and India [19, 20]. AI Factsheets automatically document model metadata and lifecycle details, and the platform connects with IBM OpenPages for comprehensive risk management [14, 18, 19]. Heather Gentile, Director of Product Management at IBM Data and AI, highlights its capabilities:

"The capability enables watsonx.governance customers to create a repository for logging details throughout a model's lifecycle, such as the rationale behind a certain model choice or which stakeholder had what involvement."

Monitoring Capabilities

Watson OpenScale tracks a range of metrics to monitor Quality, Fairness, Drift, Model Health, and Generative AI Quality. Metrics like ROC area, precision, recall, disparate impact, and drift thresholds are used, with real-time alerts sent via SMTP when evaluations fail [12, 13, 14, 15, 16, 17]. Quality monitoring focuses on model accuracy by comparing predictions to labeled feedback data, using metrics such as the area under the ROC curve (default threshold: 80%) [15, 16, 17]. Fairness monitoring applies the disparate impact method, measuring the ratio of favorable outcomes between monitored and reference groups, with a typical threshold of 80%.
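The disparate impact check described above reduces to a ratio of favorable-outcome rates. The sketch below shows the arithmetic with made-up data; it does not use the Watson OpenScale API, and the function names and sample groups are assumptions.

```python
def favorable_rate(outcomes):
    """Share of favorable (True) outcomes in a group."""
    if not outcomes:
        raise ValueError("group has no outcomes")
    return sum(outcomes) / len(outcomes)


def disparate_impact(monitored, reference):
    """Ratio of the monitored group's favorable rate to the reference group's."""
    reference_rate = favorable_rate(reference)
    if reference_rate == 0:
        raise ValueError("reference group has no favorable outcomes")
    return favorable_rate(monitored) / reference_rate


# Hypothetical loan decisions: True means approved.
monitored = [True] * 3 + [False] * 7   # 30% approved
reference = [True] * 5 + [False] * 5   # 50% approved

ratio = disparate_impact(monitored, reference)
passes = ratio >= 0.80  # the typical 80% threshold mentioned above
print(f"disparate impact = {ratio:.2f}, passes = {passes}")
```

Here the monitored group is approved at 60% of the reference group's rate, so the check fails the 80% threshold and would trigger an alert.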

The platform identifies two types of drift: Accuracy Drift, which measures runtime accuracy drops relative to training, and Data Drift, which flags inconsistencies or shifts in data distribution [12, 14]. For optimal drift detection, training data should generally be under 500MB for online training, and drift alert thresholds for classification models should be set at a minimum of 5%. The platform also evaluates generative AI for safety concerns, such as toxic language, hate speech, abusive content, and profanity in both inputs and outputs.

Suitability for Use Cases

Watson OpenScale is tailored for large enterprises managing multi-cloud models, including those from AWS, Azure, OpenAI, and IBM's own runtime. Its robust compliance and audit capabilities make it particularly suited for regulated industries [11, 19, 20]. The platform offers flexible pricing, ranging from free trials to enterprise-level production plans. Up next, the guide explores another framework designed for explainability and bias tracking.

3. Fiddler AI

Audit Log Granularity

Fiddler AI takes a layered approach to audit logging. For agentic AI, it tracks activity across five stages: Thought (interpretation), Action (planning), Execution (task performance), Reflection (self-evaluation), and Alignment (safety enforcement). This makes it possible to connect visible issues to their underlying causes. Each log includes session IDs, model versions, prompt-response history, token usage, latency, and Trust Service metrics like toxicity and faithfulness. Krishna Gade, Founder of Fiddler AI, highlights this approach:

"Agentic Observability is not just infrastructure or model telemetry. It is about understanding the full cognitive and operational loop of AI agents in action."

Compliance Integrations

Fiddler integrates seamlessly with major compliance frameworks, including EU AI Act Article 19 logging, GDPR, HIPAA, NAIC, and SR 11-7. Its Fiddler Report Generator (FRoG) simplifies the production of audit-ready Model Risk Management reports while detecting over 35 PII and 7 PHI entity types. Kevin Alvero, Chief Compliance Officer at Integral Ad Science, noted in August 2023 that customizable monitoring and audit evidence generation were crucial during the platform's implementation. For organizations with strict data sovereignty needs, Fiddler also offers air-gapped deployment options.

Monitoring Capabilities

Fiddler provides unified monitoring for traditional ML models, LLMs, and multi-agent systems. For traditional ML applications, it tracks data drift with metrics like Jensen–Shannon Divergence (JSD) and Population Stability Index (PSI), while identifying data integrity issues such as range violations and type mismatches. For LLMs, the platform includes over 14 enrichment metrics to detect issues like toxicity, jailbreaking, and hallucinations. Built on its Trust Service foundation, evaluations are 10–100 times faster, with response times under 100ms. In retrieval-augmented generation (RAG) systems, Fiddler measures Answer Relevance, Context Relevance, and RAG Faithfulness to pinpoint whether failures arise from retrieval or generation. Real-time alerts through Slack, PagerDuty, or email ensure that teams can act quickly when thresholds are breached.
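For readers unfamiliar with the two drift metrics named above, the following sketch computes PSI and JSD from binned counts. It is a textbook formulation, not Fiddler's implementation: the function names, the bin counts, and the small `eps` floor used to avoid taking the log of zero are assumptions.

```python
import math


def _normalize(counts, eps=1e-6):
    """Turn bin counts into proportions, flooring at eps so logarithms stay finite."""
    total = sum(counts)
    if total <= 0:
        raise ValueError("counts must sum to a positive number")
    floored = [max(c / total, eps) for c in counts]
    norm = sum(floored)
    return [v / norm for v in floored]


def psi(baseline_counts, production_counts):
    """Population Stability Index between two binned distributions."""
    if len(baseline_counts) != len(production_counts):
        raise ValueError("both distributions need the same bins")
    p = _normalize(baseline_counts)
    q = _normalize(production_counts)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def jsd(baseline_counts, production_counts):
    """Jensen-Shannon divergence with base-2 logs, so the result lies in [0, 1]."""
    if len(baseline_counts) != len(production_counts):
        raise ValueError("both distributions need the same bins")
    p = _normalize(baseline_counts)
    q = _normalize(production_counts)
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]

    def kl(a, b):
        return sum(ai * math.log2(ai / bi) for ai, bi in zip(a, b))

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


# Hypothetical counts of one feature across four bins.
baseline = [50, 30, 15, 5]
production = [30, 30, 25, 15]

print(f"PSI = {psi(baseline, production):.3f}")
print(f"JSD = {jsd(baseline, production):.3f}")
```

Both metrics are zero when the production distribution matches the baseline and grow as the two diverge. JSD is bounded, which makes alert thresholds easier to compare across features.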

Suitability for Use Cases

Fiddler is tailored for industries with strict regulatory requirements, such as banking, healthcare, insurance, and federal government agencies. Its capabilities address the concerns of 90% of enterprises that prioritize security, trust, and compliance when adopting agentic AI. The platform is particularly effective for managing complex multi-agent systems, which require significantly more monitoring resources - 26× more than single-agent applications. For financial institutions, Fiddler's Report Generator automates the creation of Model Risk Management reports mandated by SR 11-7 guidelines. Its unified approach scales effortlessly from simple models to highly intricate agent workflows.

Next, the guide explores Arthur AI to continue comparing audit trail frameworks.

4. Arthur AI

Audit Log Granularity

Arthur AI uses the OpenInference standard to provide detailed tracing, going beyond basic logging methods. Each log includes full prompt and completion data, token usage, costs, and parameter metadata. The platform also visualizes every step an agent takes - from the initial prompt to intermediate reasoning, tool usage, and the final action. As Ashley Nader from Arthur AI puts it:

"Arthur's new capabilities turn your AI systems from a black box into a glass box - one that's observable, optimizable, and governable."

Arthur's monitoring focuses on five main areas: LLM calls (covering prompts, completions, and metadata), tool usage (inputs, outputs, and latency), RAG calls (tracking retrieved or omitted documents), application metadata (like User and Session IDs), and key decision points. For Retrieval-Augmented Generation (RAG) systems, Arthur records retrieval and re-ranking spans for audit purposes. Continuous evaluation logs include the status (pass or fail), a score, the evaluator version, and a detailed explanation of the results.

This comprehensive logging approach supports seamless compliance integrations for the platform.

Compliance Integrations

Arthur integrates with OpenTelemetry, ensuring neutral observability, and automatically instruments popular LLM frameworks such as LangChain, LlamaIndex, and OpenAI. Each evaluation is linked to unique Trace and Annotation IDs, simplifying regulatory reviews. The platform also connects with tools like Google ADK, Mastra, AWS Strands, and CrewAI. Ian McGraw, Forward Deployed Engineer at Arthur AI, highlighted the value of these integrations:

"Collecting detailed traces allowed them to build a behavior dataset of real customer requests, establishing the evidence their buyers needed to move forward."

Monitoring Capabilities

Arthur AI takes its audit functionality further with advanced monitoring tools. Using an "LLM-as-a-judge" approach, the platform evaluates traces against safety and quality benchmarks. It tracks standard metrics like accuracy and confusion matrices, along with GenAI-specific ones such as Tool Selection Accuracy, Response Relevance, latency, and token usage. For traditional machine learning models, Arthur monitors Data Drift and Concept Drift using metrics like KL Divergence, PSI, and Wasserstein distance.
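Of the drift metrics listed above, Wasserstein distance is the easiest to compute by hand for a single numeric feature. The sketch below covers only the special case of two samples of equal size and is not Arthur's implementation; the function name and sample values are assumptions.

```python
def wasserstein_1d(baseline, production):
    """1-D Wasserstein (earth mover's) distance between two equal-size samples.

    For equal-size samples this is the mean absolute difference between the
    sorted values, i.e. the average distance each point has to move.
    """
    if not baseline or len(baseline) != len(production):
        raise ValueError("samples must be non-empty and the same size")
    pairs = zip(sorted(baseline), sorted(production))
    return sum(abs(a - b) for a, b in pairs) / len(baseline)


# Hypothetical feature values: production is the baseline shifted up by 1.
baseline = [1.0, 2.0, 3.0, 4.0]
production = [5.0, 3.0, 2.0, 4.0]

distance = wasserstein_1d(baseline, production)
print(distance)  # 1.0
```

Unlike PSI or KL divergence, this metric needs no binning and is expressed in the feature's own units, which can make drift thresholds easier to explain to reviewers.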

Real-time guardrails flag issues like PII leakage, hallucinations, prompt injections, and toxic language. Additionally, fairness audits are conducted to identify any disparate impacts across protected groups or business segments.

Suitability for Use Cases

Arthur AI is platform-agnostic, integrating with stacks like TensorFlow, SageMaker, and H2O through REST APIs and Python client libraries. It supports traditional ML on tabular data as well as NLP, computer vision, and generative AI workloads. The platform is particularly well-suited for organizations managing multi-step agent workflows or needing forensic evidence to build trust in their systems.

The Arthur Evals Engine, an open-source toolkit for real-time AI evaluation, is freely available, making these capabilities accessible to teams of all sizes. These features empower organizations to meet regulatory requirements and maintain robust audit trails for AI systems.

5. Monitaur

Audit Log Granularity

Monitaur takes automated decision logging to a detailed level, capturing every model decision in a searchable format. This creates a complete transaction history, which is especially useful in sectors like insurance and finance where regulators often demand specific evidence of transactions. The platform gathers governance evidence automatically and organizes it into a centralized library of 33 adaptable controls tailored for high-risk models. By consolidating diverse information into one accessible dashboard, Monitaur simplifies oversight for stakeholders. This robust logging system forms the backbone of its compliance and monitoring features.

Compliance Integrations

Monitaur builds on its detailed logs with a "policy-to-proof" roadmap that aligns with the NIST AI Risk Management Framework and U.S. regulatory standards, targeting industries like insurance and financial services. It supports the NAIC AI model bulletin, already adopted by over half of U.S. states, and adheres to state-specific rules for fair AI use in places like Colorado and New York. Additionally, Monitaur is SOC 2 Type II certified.

Michael Rasmussen, GRC Pundit, shared his perspective:

"There are only two solutions in the market that bring the AI governance lifecycle full-circle with operational control monitoring and enforcement of AI use in the organization. And that is Monitaur and IBM."

Monitoring Capabilities

Monitaur offers continuous checks for drift and bias, ensuring models remain compliant and effective. Its "Flight Simulator" feature enables thorough pre-deployment testing, delivering concise scorecards with actionable insights to help meet regulatory standards. The platform also evaluates metrics like disparate impact and equalized odds to reduce proxy discrimination, while connecting technical results to business outcomes. For example, a leading North American insurer used Monitaur to oversee 180 projects, implement over 4,400 controls, and process 9 billion transactions.
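Equalized odds, one of the fairness metrics mentioned above, compares true positive and false positive rates across groups. The sketch below shows the calculation on made-up labels. It is not Monitaur's implementation, and the function names and data are assumptions.

```python
def tpr_fpr(y_true, y_pred):
    """Return (true positive rate, false positive rate) for binary 0/1 labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    if tp + fn == 0 or fp + tn == 0:
        raise ValueError("each group needs both positive and negative examples")
    return tp / (tp + fn), fp / (fp + tn)


def equalized_odds_gaps(group_a, group_b):
    """Absolute TPR and FPR differences between two (y_true, y_pred) groups."""
    tpr_a, fpr_a = tpr_fpr(*group_a)
    tpr_b, fpr_b = tpr_fpr(*group_b)
    return abs(tpr_a - tpr_b), abs(fpr_a - fpr_b)


# Hypothetical outcomes (1 = qualified / approved) for two applicant groups.
labels = [1, 1, 1, 1, 0, 0, 0, 0]
group_a = (labels, [1, 1, 1, 0, 1, 0, 0, 0])  # TPR 0.75, FPR 0.25
group_b = (labels, [1, 1, 0, 0, 0, 0, 0, 0])  # TPR 0.50, FPR 0.00

tpr_gap, fpr_gap = equalized_odds_gaps(group_a, group_b)
print(tpr_gap, fpr_gap)  # 0.25 0.25
```

A model satisfies equalized odds when both gaps are close to zero. How close is close enough is a policy decision that belongs in the governance controls, not in the code.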

Suitability for Use Cases

Monitaur is built to handle the rigorous compliance and operational demands of regulated industries such as insurance (underwriting, claims, fraud), financial services (lending, KYC), healthcare (drug discovery, clinical recruitment), and human resources (talent acquisition, candidate screening). Its model-agnostic framework supports both traditional and advanced AI systems, including Generative and Agentic AI. Organizations can roll out a complete governance program in less than 90 days with Monitaur. One Fortune 200 company reported an 8× increase in AI projects within six months of adopting the platform, all while maintaining compliance. Moreover, Monitaur earned top marks in vision, pricing flexibility, and AI accelerators in the Q3 2025 Forrester Wave report.

Strengths and Weaknesses

Building on the framework reviews above, this section looks at the strengths and weaknesses of each approach. Every framework brings its own mix of advantages and trade-offs in usability, scalability, and compliance.

Latitude shines in integrating observability with workflows for continuous improvement. It allows teams to monitor model behavior, gather structured feedback, and conduct evaluations - all within a single platform.

IBM Watson OpenScale delivers enterprise-grade governance, seamlessly fitting into IBM's ecosystem. It handles complex compliance scenarios across multiple regulations, making it a solid choice for enterprises with rigorous requirements.

Fiddler AI is notable for its model-agnostic design and robust explainability features. These tools make it easier for non-technical stakeholders to grasp AI decisions. Its visual dashboards further simplify oversight and performance monitoring.

Arthur AI focuses on drift detection and performance monitoring. However, organizations in highly regulated sectors like finance or healthcare might need to supplement it to meet long retention requirements, such as the 10 years the EU AI Act requires providers of high-risk systems to keep technical documentation or the 6 years HIPAA requires for compliance documentation.

Monitaur emphasizes a compliance-first approach, offering granular decision-level logs backed by cryptographic signing. Financial institutions using blockchain-based audit trails report up to a 40% reduction in data tampering incidents. However, this rigorous compliance framework could complicate operations for teams with less mature governance structures.

| Framework | Audit Log Detail | Compliance Support | Monitoring Features | Ideal Use Cases |
|---|---|---|---|---|
| Latitude | Structured traces with evaluation results | Customizable for various regulations | Observability + feedback loops + evaluations | Teams building reliable AI products needing continuous improvement workflows |
| IBM Watson OpenScale | Enterprise-grade transaction logs | Multi-regulation support (GDPR, CCPA, EU AI Act) | Comprehensive drift, bias, and fairness monitoring | Large enterprises with existing IBM infrastructure |
| Fiddler AI | Model-agnostic logging with explainability | GDPR and CCPA compliance | Visual dashboards for drift and performance | Organizations prioritizing stakeholder transparency |
| Arthur AI | Real-time performance metrics | Basic compliance documentation | Fast drift detection and anomaly alerts | Teams requiring quick deployment and operational monitoring |
| Monitaur | Granular decision-level logs with cryptographic signing | Aligned with major compliance frameworks | Pre-deployment testing and continuous compliance checks | Regulated industries (insurance, finance, healthcare) that demand rigorous governance |

This comparison highlights how each framework balances functionality and compliance, helping you make an informed decision.

Your choice will depend on your regulatory requirements, operational readiness, and available technical resources. The data also underscores the importance of transparency: organizations with comprehensive audit trails see a 30% boost in compliance efficiency, while those lacking transparency face a 30% higher risk of non-compliance. Non-compliance can lead to steep financial penalties, making robust audit trails a critical investment.

Conclusion

Selecting the right audit trail framework boils down to three main considerations: your regulatory requirements, your team's technical expertise, and the complexity of your operations.

For teams working on LLM-powered features that require constant iteration, Latitude's open-source platform simplifies observability, evaluations, and feedback loops. It's a great option for organizations with limited resources.

However, in environments with stricter regulations, stronger cryptographic protections become necessary. Industries like finance and healthcare, for example, often require decision-level log signing with Ed25519 to create tamper-evident audit trails. With the EU AI Act introducing penalties of up to €35 million or 7% of global turnover, having a reliable audit trail isn't just a good idea - it’s a critical investment.

The importance of choosing the right framework is also reflected in market trends: the enterprise LLM market is expected to grow from $6.7 billion in 2024 to $71.1 billion by 2034. Victor Ojewale from Brown University captures the essence of audit trails:

"An audit trail is a chronological, tamper-evident, context-rich ledger of lifecycle events and decisions that links technical provenance with governance records."

To make the best choice, start by evaluating your AI risk profile and then align your framework with specific compliance requirements like GDPR, HIPAA, or the EU AI Act. Remember, even the best framework is only as effective as the way it’s implemented.

FAQs

What should an AI audit trail record, at minimum?

An AI audit trail needs to document essential actions and decisions, such as inputs, outputs, model versions, timestamps, and the entities responsible. It should also provide a detailed context for each operation - covering what the AI did, when it occurred, and the reasoning behind it. Additional metadata, like user interactions, decision justifications, and system changes, should be included. This level of detail supports transparency, accountability, and compliance, making it possible to verify and analyze AI behavior when needed.

How long do we need to retain AI audit logs for compliance?

Retention periods for AI audit logs vary based on regulatory mandates and an organization’s internal policies. Generally, these logs should be stored long enough to satisfy compliance requirements, facilitate audits, and maintain traceability. Depending on the applicable rules - such as GDPR, HIPAA, or other industry-specific standards - this timeframe can span from a few months to several years. HIPAA, for instance, requires compliance documentation to be kept for six years.

How can we make audit logs tamper-evident without slowing production?

To make audit logs tamper-evident without disrupting production, consider cryptographic logging techniques. For example, digitally signing each log entry and including the previous entry's signature or hash in the next one creates a chain of evidence that reveals any unauthorized change. Pair this with storing logs in secure, centralized systems to strengthen their integrity and enable ongoing verification. Because signing typically adds only a small cost per entry, these approaches keep logs reliable in real time without compromising operational efficiency.
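As a rough illustration of chained log entries, the sketch below links each entry to the previous one using only Python's standard library. Note the substitution: it uses HMAC-SHA256 with a shared secret rather than a true digital signature such as Ed25519, which would need a third-party cryptography library. The function names, entry layout, and demo key are assumptions.

```python
import hashlib
import hmac
import json

GENESIS = "0" * 64  # placeholder "previous MAC" for the first entry


def _mac(key, prev_mac, payload):
    """HMAC over the previous entry's MAC plus a canonical JSON form of the payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hmac.new(key, (prev_mac + body).encode("utf-8"), hashlib.sha256).hexdigest()


def append_entry(log, key, payload):
    """Append a payload to the log, chaining it to the entry before it."""
    prev_mac = log[-1]["mac"] if log else GENESIS
    entry = {"payload": payload, "prev_mac": prev_mac, "mac": _mac(key, prev_mac, payload)}
    log.append(entry)
    return entry


def verify_chain(log, key):
    """Return None if the chain is intact, else the index of the first bad entry."""
    prev_mac = GENESIS
    for index, entry in enumerate(log):
        expected = _mac(key, prev_mac, entry["payload"])
        if entry["prev_mac"] != prev_mac or not hmac.compare_digest(entry["mac"], expected):
            return index
        prev_mac = entry["mac"]
    return None


key = b"demo-key-not-for-production"  # in practice, load from a secrets manager
log = []
append_entry(log, key, {"model": "credit-v2", "input": "app-001", "output": "declined"})
append_entry(log, key, {"model": "credit-v2", "input": "app-002", "output": "declined"})
append_entry(log, key, {"model": "credit-v2", "input": "app-003", "output": "approved"})

intact = verify_chain(log, key)           # None: nothing has been altered
log[1]["payload"]["output"] = "approved"  # simulate tampering with the second entry
first_bad = verify_chain(log, key)        # 1: the altered entry is detected
```

Each append costs one hash computation, so the write path stays fast, and verification can run offline or on a schedule. With a shared key, anyone who can verify can also forge entries, which is one reason regulated environments tend to prefer asymmetric signatures.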