Test and monitor LLMs for semantic, non-semantic, and temporal OOD shifts using metrics, stress tests, and continuous evaluation.

César Miguelañez

LLMs often fail when encountering inputs they weren’t trained for. Whether it’s slang-filled customer queries or unexpected market data, these out-of-distribution (OOD) scenarios cause models to produce incorrect, overconfident responses. This can lead to reputational damage, broken workflows, or even security risks.

Key challenges include:

Semantic shifts: New, unfamiliar categories (e.g., unsupported intents in chatbots).

Non-semantic shifts: Changes in style or format (e.g., analyzing casual slang instead of formal text).

Temporal shifts: Events or data arising after the model's training cut-off.

To address these issues, you need more than a good model. You need to evaluate the entire system - prompts, retrieval methods, and tools - to ensure reliable performance across unpredictable scenarios.

Evaluation strategies include:

Failure mode analysis: Identify structural errors, task generalization issues, and safety violations.

Metrics: Use faithfulness, answer relevancy, and statistical uncertainty to spot OOD weaknesses.

Dynamic testing: Employ stress tests, live monitoring, and synthetic datasets to catch hidden issues.

Platforms like Latitude streamline this process by combining observability, evaluation, and feedback in one system, ensuring your LLMs stay effective in diverse conditions.

What is Out-of-Domain Robustness in LLMs?

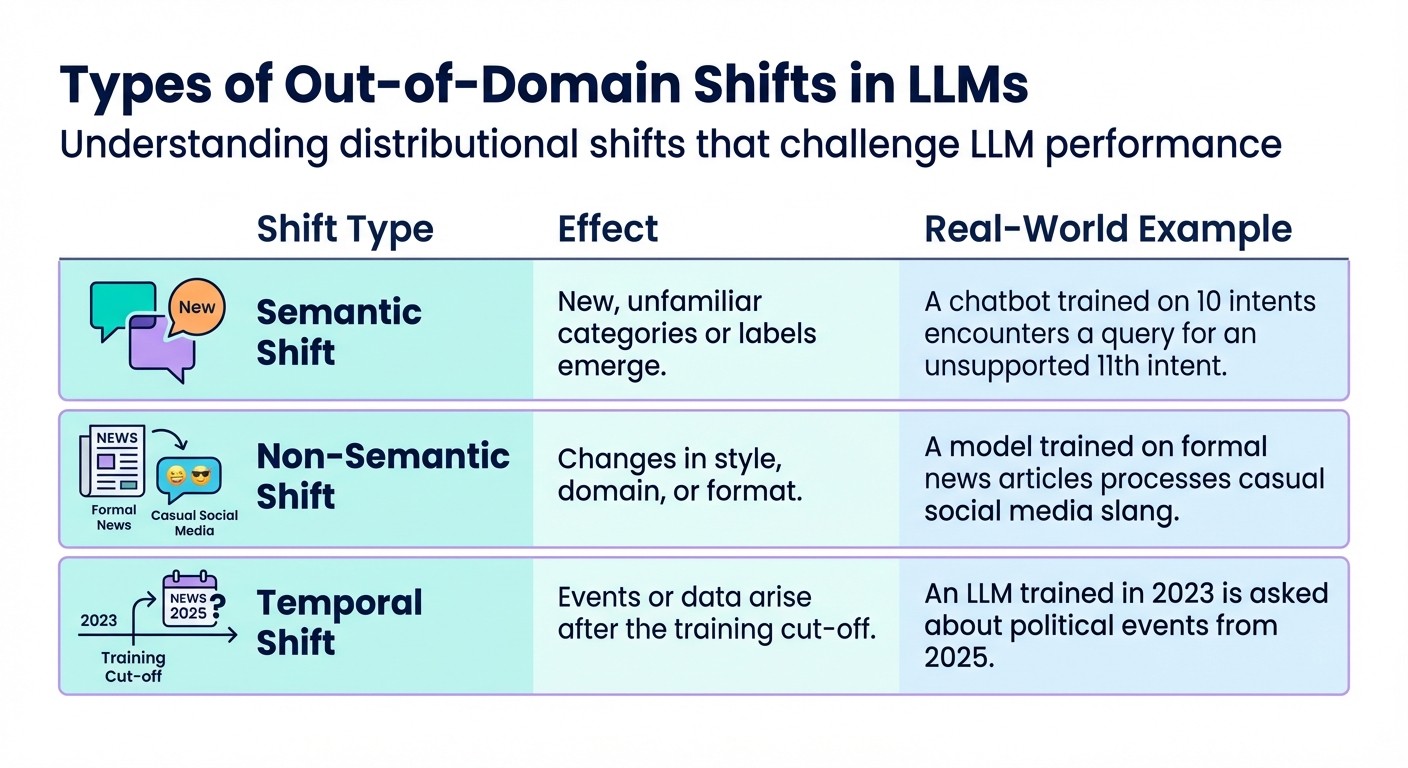

Types of Out-of-Domain Shifts in LLMs: Semantic, Non-Semantic, and Temporal

Defining Out-of-Domain Robustness

Out-of-domain robustness refers to a model's ability to perform well even when the test data differs in statistical patterns from the training data. Traditional machine learning relies on the assumption that training and test data share the same statistical characteristics - this is known as the Independent and Identically Distributed (i.i.d.) assumption. However, as Jiashuo Liu and his co-authors point out:

"Traditional machine learning paradigms are based on the assumption that both training and test data follow the same statistical pattern... in real-world applications, this i.i.d. assumption often fails to hold due to unforeseen distributional shifts."

When this assumption breaks down in real-world settings, models face inputs they were never trained to handle. For example, a customer service chatbot trained on formal, polite language might struggle with slang-filled messages. Similarly, a financial tool built on data from before 2023 may falter when analyzing economic trends in 2025. These unexpected changes, or distributional shifts, are a frequent challenge in production environments.

Understanding these shifts is a critical step before assessing a model's robustness.

Common Out-of-Domain Challenges

Out-of-domain (OOD) challenges can take different forms, each posing unique hurdles for large language models (LLMs) in production. These challenges are generally categorized into three types:

Shift Type | Effect | Real-World Example |

|---|---|---|

Semantic Shift | New, unfamiliar categories or labels emerge | A chatbot trained on 10 intents encounters a query for an unsupported 11th intent |

Non-Semantic Shift | Changes in style, domain, or format | A model trained on formal news articles processes casual social media slang |

Temporal Shift | Events or data arise after the training cut-off | An LLM trained in 2023 is asked about political events from 2025 |

These shifts do more than just lower accuracy - they also lead models to display overconfidence in their predictions for OOD data. This makes it harder to identify when a model is producing incorrect responses. Researchers from Alibaba Group emphasize this challenge:

"OOD detection is still an important task for these LLMs since the world is constantly involving. New tasks may be developed after the knowledge cut-off date"

Unlike traditional software, which often crashes with clear error messages, LLMs fail silently. They produce confident but inaccurate responses, offering no indication that something has gone wrong.

Interestingly, fine-tuned, domain-specific models often excel at handling familiar, in-distribution data. However, LLMs using in-context learning tend to perform better with OOD cases. Achieving robust performance requires not just selecting the right model but also understanding how different approaches handle unexpected inputs.

How to Evaluate LLMs for Out-of-Domain Robustness

Identifying Failure Modes in OOD Scenarios

When evaluating large language models (LLMs) in out-of-domain (OOD) scenarios, it's essential to identify where and how they fail. These failures generally fall into three categories:

Structural breakdowns: These occur when the model generates outputs that don't adhere to required formats. Think of invalid JSON schemas, missing fields, or incorrect formatting like account numbers.

Task generalization failures: These happen when the model struggles to understand your request, especially when using ambiguous or noisy prompts.

Safety violations: These occur when harmful inputs or adversarial prompts bypass the model's safety measures.

A major challenge with LLMs is their tendency to fail silently - errors often go unnoticed unless actively monitored. To tackle this, document recurring issues from production logs and use this information to shape your evaluation strategy. Once you’ve identified potential failure modes, apply specific metrics to measure and address each one.

Evaluation Metrics and Techniques

To effectively evaluate LLMs, it's crucial to use a mix of metrics that address different types of failures. Here’s how to approach this:

Deterministic validators: These are your first line of defense for structural issues. Tools like regex patterns can check email formats, schema validators ensure JSON compliance, and rule-based systems verify required fields. These checks are fast and inexpensive, making them ideal as a starting point.

LLM-as-a-judge: While 67% of AI teams rely on LLMs to score outputs, 93% report challenges with reliability. The biggest issue? Consistency. Identical inputs often yield different scores, which 42.4% of practitioners cite as a major problem. As Jackson Wells from Galileo points out:

"LLM-as-a-judge is just one tool in a much larger toolbox. Elite teams (those achieving 70%+ reliability) don't rely on a single oracle. They build composite evaluation architectures."

For cost-effective alternatives, smaller language models using binary pass/fail questions can achieve 90% accuracy at a fraction of the cost. When using LLM judges, validate their performance against human-labeled datasets using metrics like True Positive Rate and True Negative Rate, similar to how you’d evaluate classifiers.

For retrieval-augmented generation (RAG) systems, break down evaluation into two parts: retrieval (using metrics like Recall@k and Precision@k) and generation (measuring faithfulness and groundedness). This helps pinpoint exactly where OOD failures occur.

Metric | What it Measures | OOD Application |

|---|---|---|

Faithfulness | Ensures answers rely only on sources | Detects hallucinations in unfamiliar domains |

Answer Relevancy | Checks if the response addresses query | Identifies task generalization breakdowns |

Contextual Precision | Ranks relevant documents first | Evaluates retrieval in noisy contexts |

Statistical Uncertainty | Measures variance/entropy in outputs | Flags unreliable OOD generations |

Toxicity/Bias | Detects harmful or unfair content | Crucial for safety in adversarial inputs |

One metric that stands out is statistical uncertainty. Running the same prompt multiple times can reveal high output variance or token prediction entropy - both signs that the model is guessing. Use these insights to set clear thresholds. For example, if human reviewers only approve responses with Faithfulness scores above 0.75, set this as your automated benchmark.

Static benchmarks are helpful but can miss sporadic failures. That’s where dynamic evaluation becomes essential.

Dynamic Evaluation Approaches

Static benchmarks alone can’t catch every issue, especially intermittent failures. Dynamic evaluation, which continuously tests models against changing inputs, fills this gap. Start by creating a "golden dataset" of 50–200 version-controlled test cases. These should include:

Common queries

Edge cases like long or ambiguous inputs

Adversarial examples, such as prompt injections

This dataset serves as your regression test suite for ongoing evaluations.

For critical safety checks, stress testing is key. By running the same prompt at varying temperatures, you can uncover intermittent issues that single-shot tests might miss. To expand coverage further, use LLMs to generate synthetic datasets. These datasets can simulate diverse input variations and adversarial examples, making them particularly useful for rare OOD scenarios.

Method | Best For | Scalability | Data Needed | Complexity |

|---|---|---|---|---|

Batch Testing | Regression and edge case validation | High (Automated) | High (Golden Dataset) | Moderate |

Live Evaluation | Detecting drift and safety issues | High (Continuous) | Low (Production Logs) | Low |

A/B Testing | Real-world user experience | Moderate | High (Live Traffic) | High |

Automated Optimization Loops | Continuous improvement | High | Low (Eval Scores) | Moderate |

Live evaluation is particularly valuable for monitoring production traffic in real-time. It helps catch issues like model drift or safety violations with minimal data requirements. Meanwhile, A/B testing allows you to validate real-world performance directly with users, though it requires significant traffic and careful planning. Finally, automated optimization loops streamline the process by using evaluation scores to refine prompts continuously, reducing the need for manual intervention.

Tools and Workflows for Production Robustness

Using Latitude for Out-of-Domain Robustness

Latitude provides a structured approach to ensure LLMs perform reliably, even in out-of-domain scenarios. By combining observability, evaluation, and continuous improvement, it creates a cohesive workflow.

The observability layer does more than just capture production logs - it incorporates user feedback, offering a way to target specific areas for improvement. Structured feedback from domain experts can uncover issues that automated metrics might miss, ensuring a deeper understanding of failures.

Latitude also uses live monitoring alongside batch testing to evaluate performance continuously. With the "Live Evaluation" feature, new production logs are automatically tested in real time to flag performance regressions. When configuring metrics, you can tag negative indicators - like toxicity or hallucinations - as "negative." This allows the Prompt Suggestions feature to focus on optimizing these areas for improvement. By automating prompt adjustments based on evaluation results, Latitude reduces the need for manual intervention. This workflow ensures that production insights actively enhance LLM performance, rather than just being stored in dashboards.

This integrated system provides a foundation for comparing evaluation methods and ranking the best language models.

Tool Comparison for OOD Evaluations

Let’s explore how Latitude's workflow compares to other evaluation tools and their alignment with production needs.

Platform | Primary Focus | Key Evaluation Methods | Best For |

|---|---|---|---|

Latitude | End-to-end AI engineering workflow | Live monitoring, batch testing, and human feedback | Teams seeking structured observability and continuous improvement |

Custom Scripts | Flexibility and control | Deterministic validators, custom metrics | Engineering teams with specific requirements and resources |

LLM-as-Judge Tools | Automated scoring | Model-based evaluation | Quick assessments where consistency isn't critical |

Latitude excels in offering an all-in-one solution that integrates observation, testing, and optimization seamlessly. By avoiding the need for multiple fragmented tools, it enables faster detection and resolution of issues in out-of-domain scenarios.

Additionally, Latitude's open-source foundation ensures flexibility. You’re not tied to proprietary systems, giving you the freedom to adapt and expand the workflow as your needs change. This adaptability makes it an excellent choice for teams prioritizing long-term robustness.

Best Practices for Production Robustness

Building Continuous Evaluation Pipelines

Creating robust evaluation pipelines involves layering different types of checks. Start with quick, deterministic validators like format checks, JSON schema validation, and PII detection. Then, incorporate heuristic scoring methods that use embeddings and RAG-specific metrics. For more subjective assessments, rely on LLM-as-Judge models or human reviews.

To maintain a clear audit trail, version both prompts and evaluation datasets. When automating scoring, pairwise comparisons - where you determine which of two outputs is better - are usually more reliable than assigning absolute ratings.

Adopt an eval-driven development process by setting up 10–20 test cases that cover both typical and edge scenarios before adding new features. This proactive approach defines success criteria early, reducing the chance of discovering issues after deployment. In fact, systematic evaluation frameworks have been shown to lower production failures by up to 60% while speeding up deployment cycles by a factor of five.

To detect quality issues, track overall score distributions instead of focusing on individual outcomes. Silent quality degradation can go unnoticed until users report problems, so use intelligent sampling to review 1–5% of production traffic. Focus on low-confidence outputs or sensitive topics for human review. Additionally, generate synthetic data to create adversarial scenarios, testing high-risk edge cases that might not appear frequently but could lead to significant issues.

Beyond these technical steps, fostering collaboration across teams is essential to ensure quality and responsiveness.

Cross-Team Collaboration

Strong technical pipelines are only part of the equation; teamwork is just as crucial for tackling out-of-domain challenges. Achieving robustness in unfamiliar domains requires contributions from multiple roles. Engineers focus on instrumentation and infrastructure, product managers set success criteria and prioritize updates, and domain experts bring the nuanced judgment that automated metrics can't replicate. This ensures that outputs align with practical, real-world needs.

Develop workflows where engineers create the data infrastructure, product managers oversee annotation systems, and domain experts validate the outputs. This coordinated effort connects technical capabilities with business goals and specialized knowledge, creating a feedback loop that continuously improves model performance.

Conclusion

Ensuring out-of-domain robustness is a critical factor in differentiating reliable AI products from those prone to significant failures. Large Language Models (LLMs) often fail silently, providing responses that may seem confident but are incorrect, off-brand, or even harmful. Without systematic evaluation, these issues can go unnoticed until users encounter problems or business performance starts to falter.

Real-world production environments are anything but predictable. Users submit ambiguous, adversarial, or edge-case queries that rarely appear in controlled datasets. As highlighted earlier:

"LLM Robustness refers to the LLM's ability to maintain performance, consistency, and reliability across a wide range of prompt conditions, generating accurate and relevant responses regardless of question phrasing types from users".

This robustness is even more critical when LLMs are used as the core decision-making engines in AI-driven workflows. Domain shifts or noisy inputs can disrupt entire processes if not addressed effectively.

To tackle these challenges, implementing a proactive reliability loop is essential. This approach connects evaluation directly to production improvements, turning subjective quality issues into measurable progress. The process involves logging production data, annotating samples with human feedback, identifying recurring failure patterns, and building automated checks to prevent similar issues in the future. Composite evaluation strategies - combining programmatic rules, LLM-as-Judge models for subjective assessments, and human expertise for nuanced cases - help ensure thorough oversight. By linking evaluation data directly to production logs, teams can quickly trace failing scores back to their full execution context, saving valuable time.

Platforms like Latitude simplify this process by integrating observability, evaluation, and collaborative feedback into a single workflow. Instead of juggling disconnected tools, teams can define custom criteria tailored to their domain, enable live monitoring for critical metrics, and curate golden datasets from real-world edge cases. This unified strategy not only addresses hidden quality issues but also transforms them into actionable insights, driving greater reliability in production AI systems.

FAQs

How can I tell if my LLM is failing out-of-domain?

To gauge how well your LLM handles unfamiliar scenarios, focus on measurable factors like accuracy, hallucination rate, relevance, and safety. These metrics provide a clear picture of how effectively your model operates outside its comfort zone.

Use a mix of methods to evaluate performance:

Targeted out-of-domain testing: Deliberately introduce tasks or queries that the model hasn't encountered before to see how it responds.

Human feedback: Gather input from users or reviewers to identify errors, inconsistencies, or areas needing improvement.

Automated evaluations: Implement tools that can systematically measure performance across different metrics.

Keep an eye out for red flags like hallucinations (fabricated or inaccurate information), formatting issues, or responses that don't align with the intended context. These are signs that the model might be struggling.

By combining ongoing monitoring with structured evaluations, you can pinpoint weaknesses and work toward building a more reliable and adaptable model for new challenges.

What’s the fastest way to test OOD robustness before launch?

The fastest way to gauge how well your model handles out-of-domain scenarios before launch is by conducting automated evaluations. This involves using predefined datasets and analyzing live production logs. Structured approaches like batch testing, live evaluation, and A/B testing can help pinpoint potential problems quickly, ensuring the model delivers consistent performance across various situations.

How should I evaluate OOD risk in a RAG pipeline?

To assess out-of-domain (OOD) risk in a Retrieval-Augmented Generation (RAG) pipeline, it's important to focus on two key areas: retrieval accuracy and the grounding of generated answers.

For retrieval accuracy, metrics like Precision@K are essential. These help measure how effectively the system retrieves relevant information from its knowledge base. On the other hand, evaluating the grounding of generated answers ensures that the model relies on retrieved evidence, maintaining faithfulness to the source material.

A combination of automated metrics and manual reviews provides a well-rounded evaluation. Automated tools can quickly flag potential issues, while manual checks help ensure the nuanced accuracy of responses. Building test sets specifically designed for OOD scenarios is another crucial step. These sets simulate conditions the model might encounter in unfamiliar domains, helping to identify weaknesses early.

Finally, consistent performance monitoring over time is key. Regular assessments ensure the system remains reliable, even as it encounters new and unseen domains.