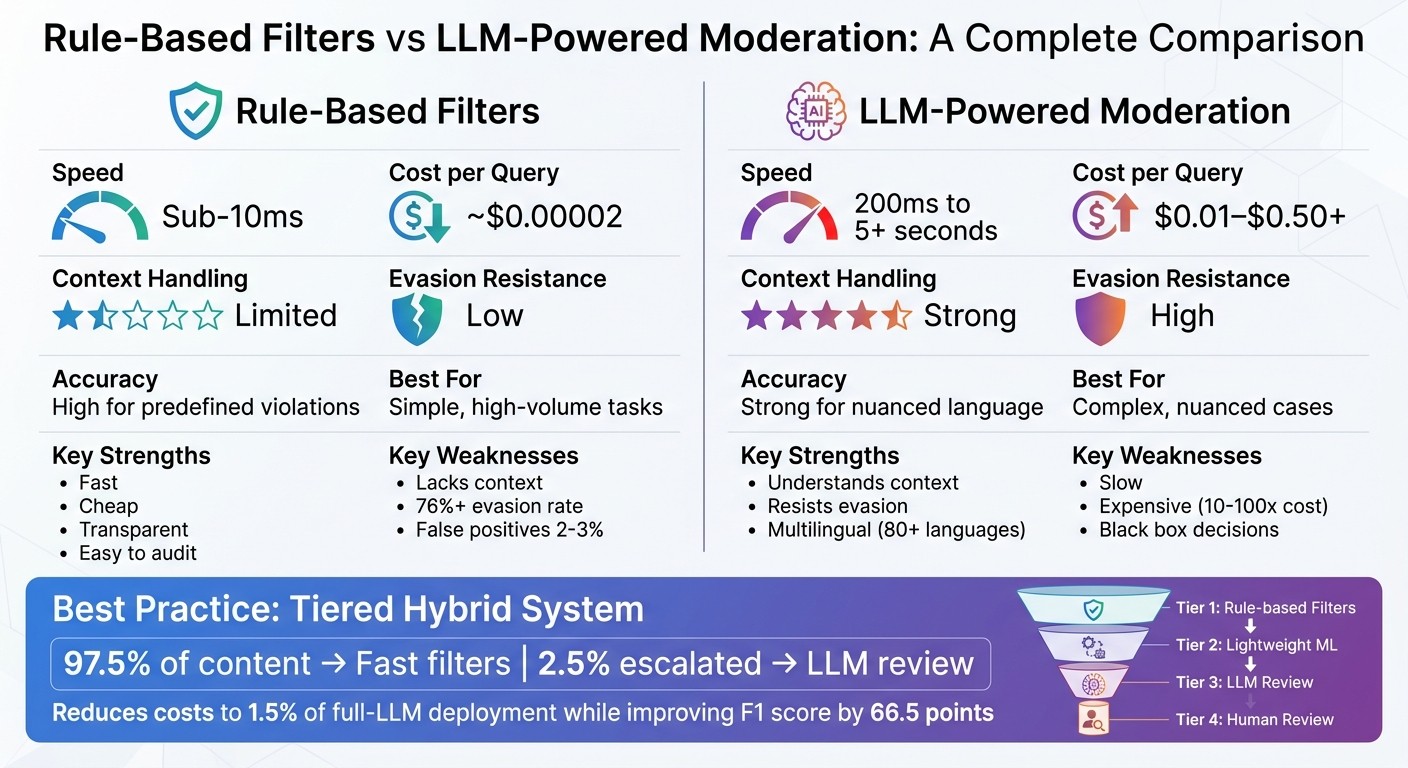

Compare rule-based filters and LLM moderation on speed, cost, accuracy, and why a hybrid tiered approach often works best.

César Miguelañez

Content moderation is critical for online safety, and two key approaches dominate the field: rule-based filters and LLM-powered systems. Here's a quick breakdown:

Rule-Based Filters: Use blocklists, keywords, and patterns to flag violations. They're fast, cost-effective, and easy to audit but struggle with context and evolving language. Common issues include false positives and evasion tactics.

LLMs (Large Language Models): Analyze context and intent, catching nuanced or coded language. They excel in complex cases but are slower, more expensive, and harder to explain.

Key Takeaways:

Speed: Rule-based filters process content in milliseconds, ideal for real-time needs like gaming chats.

Accuracy: LLMs outperform in understanding sarcasm, context, and implicit abuse but can still make mistakes. To minimize these errors, teams should focus on running evaluations on their moderation prompts.

Cost: Rule-based systems are cheaper; LLMs require expensive GPUs or APIs.

Scalability: Rule-based systems handle millions of requests daily; LLMs are better for high-risk, nuanced cases.

Best Use: Combining both systems in a tiered approach balances speed, cost, and accuracy.

Quick Comparison:

Feature | Rule-Based Filters | LLM-Powered Moderation |

|---|---|---|

Speed | Sub-10ms | 200ms to 5+ seconds |

Cost per Query | ~$0.00002 | $0.01–$0.50+ |

Context Handling | Limited | Strong |

Evasion Resistance | Low | High |

Best For | Simple, high-volume tasks | Complex, nuanced cases |

A hybrid system - using rule-based filters for straightforward cases and LLMs for complex ones - maximizes efficiency while reducing costs and risks.

Rule-Based Filters vs LLM Moderation: Speed, Cost, and Accuracy Comparison

How Rule-Based Filters Work

Rule-based filters operate using straightforward if-then rules to scan content against blocklists, keyword databases, and regex patterns. If the content matches any of the predefined rules, the system blocks it immediately. Think of it like a security checkpoint with a strict list of banned items - anything that matches the list gets stopped right away.

These filters rely on techniques like keyword filtering, which compares text to a list of prohibited words, and regex patterns, which are particularly useful for spotting structured data like email addresses, phone numbers, or credit card numbers. Some advanced systems even use phonetic algorithms to catch disguised terms like "h@te" or spaced-out versions such as "h a t e". In many cases, these filters act as the first line of defense, running on edge infrastructure to block obvious violations - like spam templates or banned URLs - before more complex systems step in. This initial layer ensures that straightforward violations are handled quickly, saving time for more nuanced moderation processes.

"The first stage is the least glamorous and does the most work. Regex patterns, exact-match hash databases... and keyword blocklists handle the obvious." - Tian Pan, Engineer-Founder

These systems are incredibly fast, processing messages in less than a millisecond. This speed makes them perfect for real-time platforms, such as live gaming chats or social media networks handling millions of messages every day. Some teams even maintain keyword lists with over 10,000 terms across multiple languages, though managing such extensive lists comes with its own set of challenges.

While these filters are excellent at catching known violations, they are often just the starting point for more sophisticated moderation methods.

Advantages of Rule-Based Filters

Rule-based filters shine in high-volume environments due to their efficiency. With processing times under 10 milliseconds and costs that are 10–100 times lower per message compared to more complex models, they’re ideal for platforms needing rapid, large-scale moderation without relying on expensive GPU infrastructure.

Another major plus is their transparency. Since these systems operate deterministically, their decisions are easy to trace and reproduce. This is particularly helpful for trust and safety teams conducting audits or responding to user appeals. For example, if a message is blocked, the exact rule responsible can be identified, which is crucial for accountability and regulatory requirements.

These filters are also highly effective at detecting predictable patterns, making them ideal for tasks like identifying Personally Identifiable Information (PII). With regex, they can spot Social Security numbers, credit card details, phone numbers, and email addresses. Additionally, they’re great for technical safety checks, such as identifying SQL injection attempts, command injections, or malicious URLs and scam-related phrases.

Drawbacks of Rule-Based Filters

Despite their strengths, rule-based filters have some glaring weaknesses. The biggest issue is their lack of context. For instance, they treat "kill the process" and "kill someone" the same way because they don’t understand semantics. They also fail to pick up on nuances like sarcasm or irony. A mocking statement like "Wow, you're so smart for someone who can't even tie their shoes" might bypass a keyword filter entirely, even though the intent is clear to a human.

Evasion is another major problem. Bad actors can easily bypass these systems using tricks like character substitution (e.g., "h@te"), Unicode homoglyphs that mimic Latin characters, spacing tricks (e.g., "h a t e"), or encoding methods like Base64 and ROT13. These tactics can lead to evasion rates of over 76% against standard keyword-based systems. This creates a constant game of catch-up for moderation teams, who must continually update their blocklists.

The maintenance workload is another downside. As language evolves and new edge cases arise, teams have to manually update rules, which can be both time-consuming and expensive. This work often gets deprioritized. Additionally, these systems are prone to errors like the "Scunthorpe problem", where legitimate content is blocked because it contains a prohibited substring (e.g., blocking "skill" because it contains "kill"). Such issues can result in 2–3% false positives, frustrating users and highlighting the limitations of relying solely on rule-based filtering.

These challenges make it clear that while rule-based filters are useful, they are best used as part of a broader, more comprehensive moderation strategy.

How LLM-Powered Moderation Works

Unlike traditional rule-based filters, moderation powered by large language models (LLMs) brings a deeper understanding of context. Instead of simply flagging specific words or patterns, models like GPT-4 and Llama 2 analyze the entire message to assess intent. These systems use transformer architecture with self-attention mechanisms, which evaluate how each word relates to others in the sequence. This approach allows them to catch subtleties that basic keyword filters often miss.

One standout feature of these models is the policy-as-prompt framework. Instead of retraining models with thousands of labeled examples, moderation policies can be updated by tweaking the instructions given to the model. For instance, in February 2025, Spotify researchers used GPT-4o-mini to moderate over 2,100 user-generated playlist descriptions across categories like hate speech and sexual content. They discovered that structured prompts with bullet points performed more accurately than dense, paragraph-style instructions. This method has gained recognition from experts:

"LLMs can interpret nuanced context, enforce complex policies in natural language, and operate across 80+ languages without separate per-language models."

Alice Hunsberger, Head of Trust & Safety, Musubi

These models are particularly skilled at detecting coded language and implicit abuse that traditional filters often overlook. Using chain-of-thought reasoning, they excel in handling context-heavy, nuanced cases. Moreover, foundational models support moderation in over 80 languages without requiring individual training for each one.

Advantages of LLM Moderation

One of the key strengths of LLMs is their contextual analysis. They don’t just look for banned words - they interpret the intent behind them. For example, they can differentiate between a slur used in an attack versus one quoted in a discussion. That said, they still struggle with this distinction in about 15–20% of cases. Their ability to adapt is another major benefit. Moderation policies can be updated in minutes by modifying prompts, offering flexibility without the need for retraining.

LLMs are also resistant to evasion tactics. Tricks like character substitution, Unicode manipulation, or unusual spacing don’t fool them because they rely on semantic patterns rather than exact matches. Tools like FraudSquad, which use LLM-generated semantic embeddings, have shown a 44% improvement in detecting spam compared to older methods.

Additionally, LLMs can explain their decisions, offering reasoned responses that help build user trust and streamline appeals. Despite these strengths, there are still challenges to address.

Drawbacks of LLM Moderation

One major drawback is latency. Processing a single message can take anywhere from 100 milliseconds to 3 seconds, which makes LLMs unsuitable for real-time applications like gaming chats, where responses need to be nearly instantaneous. To address this, many systems use tiered architectures, reserving LLMs for the most complex cases.

Cost is another concern. Moderation using LLMs can be 10 to 100 times more expensive per message compared to traditional NLP methods. However, by routing 97.5% of safe content through lightweight filters and using LLMs only for the riskiest 2.5% of cases, costs can be reduced to about 1.5% of a full LLM deployment while improving the F1 score by 66.5 points.

Explainability also poses challenges. These models produce confidence scores rather than clear-cut decisions, requiring category-specific thresholds to balance false positives and negatives. False positives can frustrate users, while false negatives can compromise safety. If false-positive rates exceed 2–3%, users may start self-censoring or even leave the platform.

Finally, there’s the risk of automation bias, where human reviewers overly depend on LLM suggestions, potentially overlooking errors. This issue is compounded when models generate "unfaithful" reasoning - explanations that sound logical but are factually wrong. For instance, in a Reddit moderation study, LLMs achieved a 92.3% true-negative rate for identifying non-violating posts but only a 43.1% true-positive rate for complex rule violations.

Rule-Based Filters vs LLMs: Key Differences

When deciding between rule-based filters and LLM-powered moderation, it’s crucial to understand their contrasting strengths. These two approaches differ significantly in accuracy, latency, flexibility, and cost - factors that can heavily influence your choice depending on your operational priorities.

Accuracy and Recall

Rule-based systems are deterministic, meaning they always produce the same output for a given input. They excel at tasks with clearly defined parameters, like detecting credit card numbers via regex or blocking specific slurs. However, they struggle to handle anything outside their predefined rules. If a violation doesn’t match the programmed logic, it simply goes unnoticed.

On the other hand, LLMs employ a probabilistic approach, analyzing entire messages using advanced transformer architectures. This allows them to grasp context and nuance, catching violations that rule-based systems would miss. For example, an LLM can assess whether the phrase "He's just a jogger" is harmless or a veiled racial slur by considering the broader conversation. Rule-based filters, in contrast, lack this contextual understanding and would likely overlook such subtleties.

"LLMs' superiority in accuracy derives from their technical structure and pretraining process, which enable them to better understand complex contexts as well as to adapt to changing circumstances."

Artificial Intelligence Review

Another advantage of LLMs is their ability to function with minimal training data. While traditional machine learning models require thousands of labeled examples to perform reliably, LLMs can deliver results with zero-shot or few-shot prompting.

Dimension | Rule-Based Filters | LLM-Powered Moderation |

|---|---|---|

Accuracy | High for predefined violations | Strong for nuanced or evolving language |

Recall | Limited; misses sarcasm, coded language | High; handles obfuscation and hybrid inputs |

Contextual Depth | Low; focuses on keywords | High; evaluates entire message context |

Output Nature | Deterministic | Non-deterministic |

Auditability | Excellent; fully traceable | Limited; often seen as a "black box" |

Even humans struggle with moderation consistency. A study of 43 U.S. Supreme Court free speech cases revealed dissenting opinions in 60% of rulings, highlighting the inherent subjectivity of moderation. This underscores that no system - human or machine - can achieve perfect accuracy.

Latency and Scalability

When it comes to latency, rule-based filters are unmatched. They process content in less than a millisecond, making them ideal for real-time applications like gaming chats or live streams where delays must stay under 100 milliseconds. These filters run efficiently on standard CPUs, keeping infrastructure costs minimal.

LLMs, however, introduce delays ranging from 200 milliseconds to several seconds per message. For example, LLM Guard 3-8B has a median latency of 800 milliseconds, while BERT-based classifiers can process content on a CPU in 45 milliseconds.

"For sub-100ms latency budgets, such as in gaming or live chat, NLP is the only viable option."

Cost differences are just as stark. Processing 1 million requests costs around $20 with regex filters, while using GPT-4 can range from $3,000 to $10,000. LLM moderation is typically 10 to 100 times more expensive per message than rule-based systems.

To balance these limitations, some platforms use tiered architectures. Quick, rule-based checks handle straightforward violations, while LLMs are reserved for ambiguous cases. However, layering multiple systems can amplify false positives; for instance, five independent filters with 90% accuracy each could result in a 41% system-wide false positive rate.

Dimension | Rule-Based Filters | LLM-Powered Moderation |

|---|---|---|

Latency | Sub-10ms | 200ms to 5s+ |

Cost per Query | Near $0 | $0.01–$0.50+ |

Hardware | Commodity CPU | High-end GPU (A100/H100) |

Scalability | Extremely high (millions/day) | Limited by API/GPU availability |

Best For | Real-time, high-volume, simple tasks | Context-rich, high-risk, nuanced cases |

This trade-off between speed, cost, and complexity often dictates which system is better suited for specific use cases.

Flexibility and Adaptability

Adaptability is another key area where these systems diverge.

Rule-based systems are rigid. They can only address scenarios explicitly programmed into their logic. As language evolves or users find new ways to bypass filters, teams must manually update the rules. Over time, this can lead to rule sprawl, where a system initially designed with 20 rules grows to 2,000, becoming increasingly difficult to manage.

"Rules only handle scenarios someone anticipated. Novel inputs either bypass the system or trigger inaccurate responses."

IdeaPlan

LLMs, by contrast, adapt much more quickly. They can handle new scenarios almost immediately through few-shot prompting, while rule-based systems might require weeks of development. Policy updates can be implemented by simply tweaking a natural language prompt or adding a few examples.

"LLMs can quickly adapt to changes in policy and context without re-annotating the data and re-training the model."

Artificial Intelligence Review

Additionally, LLMs often support over 30 languages out of the box, thanks to their training on diverse datasets. Rule-based systems, however, are usually "English-first" and require separate pipelines for other languages - a labor-intensive process.

Dimension | Rule-Based Filters | LLM-Powered Moderation |

|---|---|---|

Adaptability | Low; manual updates required | High; adjusts via prompt changes |

Handling Novel Cases | No; limited to pre-coded scenarios | Yes; generalizes to unseen inputs |

Maintenance | High at scale (rule sprawl) | Low (prompt engineering) |

Setup Time | Days to weeks | Hours to days |

Data Requirements | None (domain expertise only) | Zero to few-shot examples |

Cost and Explainability

Operational costs further distinguish these systems. Rule-based filters are highly cost-efficient, capable of scaling to millions of messages per day with negligible expenses. LLMs, on the other hand, demand expensive GPUs or API subscriptions, driving up costs significantly.

For 1 million requests, regex filtering costs roughly $20, OpenAI’s Moderation API costs $200, and GPT-4 exceeds $3,000.

Explainability is another major difference. Rule-based systems are fully auditable, as every decision ties back to a specific rule. LLMs, however, operate more like a "black box", providing confidence scores rather than clear-cut decisions. Adjusting these scores requires careful calibration, especially for safety-critical tasks like detecting personal data.

"LLMs' accuracy advantage is significantly discounted by their increased latency and cost in moderating easy cases."

Springer Nature

In safety-critical scenarios like PII detection, a fail-closed strategy (blocking uncertain cases) is often safer. For user-facing applications, however, a fail-open approach (allowing borderline cases) may better preserve user experience. The choice ultimately hinges on balancing safety with usability.

These distinctions highlight the importance of aligning your moderation strategy with your platform's unique demands.

Combining Rule-Based Filters and LLMs

By blending rule-based filters with large language models (LLMs), moderation systems can harness the strengths of both approaches while addressing their weaknesses. This hybrid setup balances speed, cost, and accuracy by using quick filters for clear violations and reserving complex cases for deeper analysis. This tiered approach can slash inference costs to about 1.5% of a fully LLM-based system while boosting F1 scores by 66.5 points. Around 97.5% of safe content is handled by fast, low-cost filters, leaving only the riskiest 2.5% for more expensive LLM evaluations.

Tiered Moderation Strategies

A tiered or "waterfall" architecture breaks moderation into distinct levels, each tailored to different content complexities:

Tier 1: Relies on rule-based methods like keywords, regex, and blocklists to catch obvious violations such as spam, banned URLs, and exact-match slurs. This tier operates with sub-10ms latency.

Tier 2: Uses lightweight machine learning models (1–15 billion parameters) for tasks like detecting personal information (PII) or standard toxicity, with a latency of 10–100ms.

Tier 3: Deploys advanced LLMs for nuanced cases, such as interpreting sarcasm, identifying coded hate, or making complex policy decisions, with latency ranging from 1 to 3 seconds.

Tier 4: Escalates high-stakes decisions to human reviewers for final judgment.

Tier | Technology | Latency | Best For |

|---|---|---|---|

Tier 1 | Keywords, Regex, Blocklists | <10ms | Spam, exact-match slurs, PII patterns |

Tier 2 | Lightweight ML (1B–15B params) | 10–100ms | Category-specific toxicity, jailbreak detection |

Tier 3 | Advanced LLM (LLM-as-Judge) | 1–3 seconds | Sarcasm, nuanced policy, edge cases |

Tier 4 | Human Review | Minutes/Hours | High-impact decisions, legal/cultural context |

Confidence-based routing ensures the right tier handles each request. High-confidence cases are processed automatically - either approved or blocked - while borderline content is escalated to an LLM for further analysis. This design keeps latency low for the majority of requests.

"Content moderation at production scale requires a cascade of systems, not a single one, and the boundary decisions between those stages are where most production incidents originate."

Tian Pan, Engineer-Founder

In 2025, Meta revised its moderation policy for videos by raising the violation threshold for removal from 25% to 50% confidence. This change prioritized reducing false positives, which had been frustrating creators and power users, even though it allowed more false negatives (missed violations).

Fallback and Parallel Modes

To complement the tiered approach, fallback and parallel modes add flexibility and efficiency:

Fallback Mode: Filters operate sequentially, starting with fast rule-based checks. Content escalates to an LLM only if earlier checks are inconclusive. This minimizes latency for straightforward cases while ensuring ambiguous ones get deeper scrutiny.

Parallel Mode: Runs checks for toxicity, PII, and brand safety simultaneously, then combines the results. This reduces overall latency to match the slowest individual check, making it ideal for real-time applications like gaming chat.

"The objective is to execute only the necessary checks as cost-effectively as possible."

Tian Pan, Engineer-Founder

However, false positives can stack up across layers. For instance, if five independent checks each have a 2% false positive rate, the combined chance of blocking legitimate content can climb to nearly 10%. To counter this, thresholds should be adjusted based on context - stricter for sensitive issues like self-harm, looser for less critical matters like profanity.

For streaming content, an asynchronous approach applies fast filters to chunks as they stream, while a full LLM-based review validates the entire response after generation. This ensures a responsive user experience without compromising thorough oversight.

Platforms like Latitude can help teams monitor hybrid systems in action, identify failure points where content is misrouted, and analyze real incidents to prevent similar issues in the future. By grounding AI guardrails in contextual understanding, organizations are projected to cut AI-related incidents by 65% by 2026 compared to those relying solely on rule-based systems.

Production Implementation Guide

To deploy a hybrid moderation system effectively, it's essential to maintain clear visibility into tier performance, misrouted content, and recurring failure patterns. One of the most critical decisions is setting the routing threshold between tiers. Nail this, and you could slash costs to just 1.5% of a naive full-LLM deployment while boosting F1 scores by an impressive 66.5 points. This visibility not only reinforces cost efficiency but also highlights the improved performance metrics from earlier evaluations.

Using Observability Platforms

Observability platforms play a key role in keeping hybrid systems running smoothly. These tools let you track production issues and pinpoint their causes. For instance, Latitude organizes data around failure modes, categorizing them as active, in-progress, resolved, or regressed. This setup helps you monitor trends in moderation accuracy - whether it's improving or slipping.

Latitude's GEPA feature takes things further by generating evaluators based on domain-expert annotations of real failures, ensuring your focus stays on actual production problems. Another critical metric to watch is the appeal overturn rate, which measures how often human reviewers reverse appealed decisions. This metric directly highlights where your classifier might be miscalibrated.

"The appeal overturn rate - what percentage of appealed decisions the human reviewer reverses - tells you directly where your classifier is miscalibrated." - Tian Pan, Engineer-Founder

And here's a key insight: when false-positive rates climb above 2–3%, users often start to self-censor or even leave the platform. That makes keeping these rates low a top priority.

Integration Tools for Hybrid Systems

A variety of tools are available to streamline monitoring and evaluation in hybrid systems. Langfuse, LangSmith, Braintrust, and Helicone are all worth considering. For example:

Langfuse: Offers a user-friendly interface for managing and versioning prompts. It even allows instant rollbacks of moderation rules without requiring a full production deployment.

Helicone: Uses a proxy integration, adding only 20–50 ms of latency per call. It provides a free tier for up to 10,000 requests per month, with additional calls costing $0.0002 each.

Integration methods differ across tools. Helicone relies on a proxy approach, LangSmith uses environment variables, and Langfuse works through SDK decorators or OpenTelemetry. The right choice depends on your team's deployment needs and how much latency you can tolerate. This is especially critical if you're routing 97.5% of safe content through ultra-fast Tier 1 filters (under 10 ms) before escalating to slower LLM checks.

Conclusion

Deciding between rule-based filters and LLM-powered moderation comes down to understanding their strengths. Rule-based systems deliver predictable results at a low cost, making them ideal for simple tasks like detecting spam or enforcing blocklists. They also operate incredibly fast, processing requests in sub-millisecond times. On the other hand, LLMs shine in more nuanced situations, such as understanding context, sarcasm, or edge cases that traditional patterns might miss. However, these benefits come with higher costs and slower processing speeds.

A hybrid approach combines the best of both worlds. By routing the majority of safe content - around 97.5% - through lightweight rule-based filters and escalating only the riskiest 2.5% to LLMs, production teams can cut inference costs to just 1.5% of what a full-LLM deployment would require, while improving F1 scores by 66.5 points. This tiered system is also critical for maintaining user trust. False-positive rates above 2–3% can lead users to self-censor or even leave platforms, making accuracy a top priority.

"Content moderation at production scale requires a cascade of systems, not a single one, and the boundary decisions between those stages are where most production incidents originate." - Tian Pan, Engineer-Founder

These insights lay the groundwork for practical moderation strategies. As outlined earlier, start with rule-based filters for clear-cut cases. Use LLMs as a "judge" for ambiguous scenarios and to create labeled data for training. Over time, these labeled examples can feed into custom ML models that strike a balance: more context-aware than rules but faster and cheaper than LLMs. This layered, contextual AI approach could reduce AI-related moderation issues by 65% by 2026.

The real question isn't about which technology is more advanced - it's about what your specific moderation needs demand. For systems managing millions of daily requests, a hybrid strategy is often the most effective choice.

FAQs

How do I choose the right threshold for sending content to an LLM?

To determine the right threshold, start by dividing content into two categories: easy cases (clear violations or obviously safe content) and hard cases (ambiguous or context-dependent content). For easy cases, set higher thresholds to prioritize speed and accuracy. For hard cases, use lower thresholds to allow for more detailed analysis and, when necessary, user input.

Continuously refine these thresholds by reviewing feedback and performance data. This helps ensure the system remains accurate, transparent, and contextually appropriate over time.

When should I use “fail-closed” vs “fail-open” moderation?

Choosing between fail-closed and fail-open moderation comes down to balancing safety with user experience.

Fail-closed systems block content when there's uncertainty, putting safety first. However, this approach can lead to false positives, where harmless content gets unnecessarily blocked.

Fail-open systems, on the other hand, allow content to go through during uncertainty. While this improves user flow and experience, it increases the risk of harmful content slipping through.

The choice depends on the context. Use fail-closed in environments requiring strict compliance or where safety is paramount, like financial systems or healthcare platforms. Opt for fail-open in less sensitive situations where maintaining a smooth user experience is a higher priority. Always weigh the potential impact of false positives and negatives alongside the system's reliability.

How can I reduce false positives without missing high-risk abuse?

To cut down on false positives while still flagging high-risk abuse, it's important to fine-tune your moderation thresholds based on your platform's specific data. Default settings are designed for general use and may not align with the unique needs of your audience.

Consider implementing a tiered moderation system. Start with lightweight filters to catch clear-cut cases, then escalate more complex or high-risk content to LLM-based moderation tools. This approach strikes a balance between accuracy and sensitivity, helping you reduce false positives while effectively identifying serious risks.