Practical comparison of top LLMs and a testing checklist to prevent regressions, hidden costs, latency issues, and output drift.

César Miguelañez

Switching between large language models (LLMs) isn't as simple as it seems. Each model processes inputs differently, has unique tokenization methods, and varies in cost, speed, and accuracy. This article compares four leading LLMs - GPT-4o, Claude 3.5 Sonnet, Llama 3.1 405B, and Gemini 1.5 Pro - across key performance metrics like accuracy, latency, cost, and context window capabilities.

Key Takeaways:

GPT-4o: Fast response times and cost-effective for high-volume tasks, but struggles with complex reasoning.

Claude 3.5 Sonnet: Excels in coding and multi-step reasoning but has higher costs.

Llama 3.1 405B: Open-source flexibility with strong classification performance, though version updates may cause regressions.

Gemini 1.5 Pro: Handles massive contexts with a 2M-token window but has slower response times.

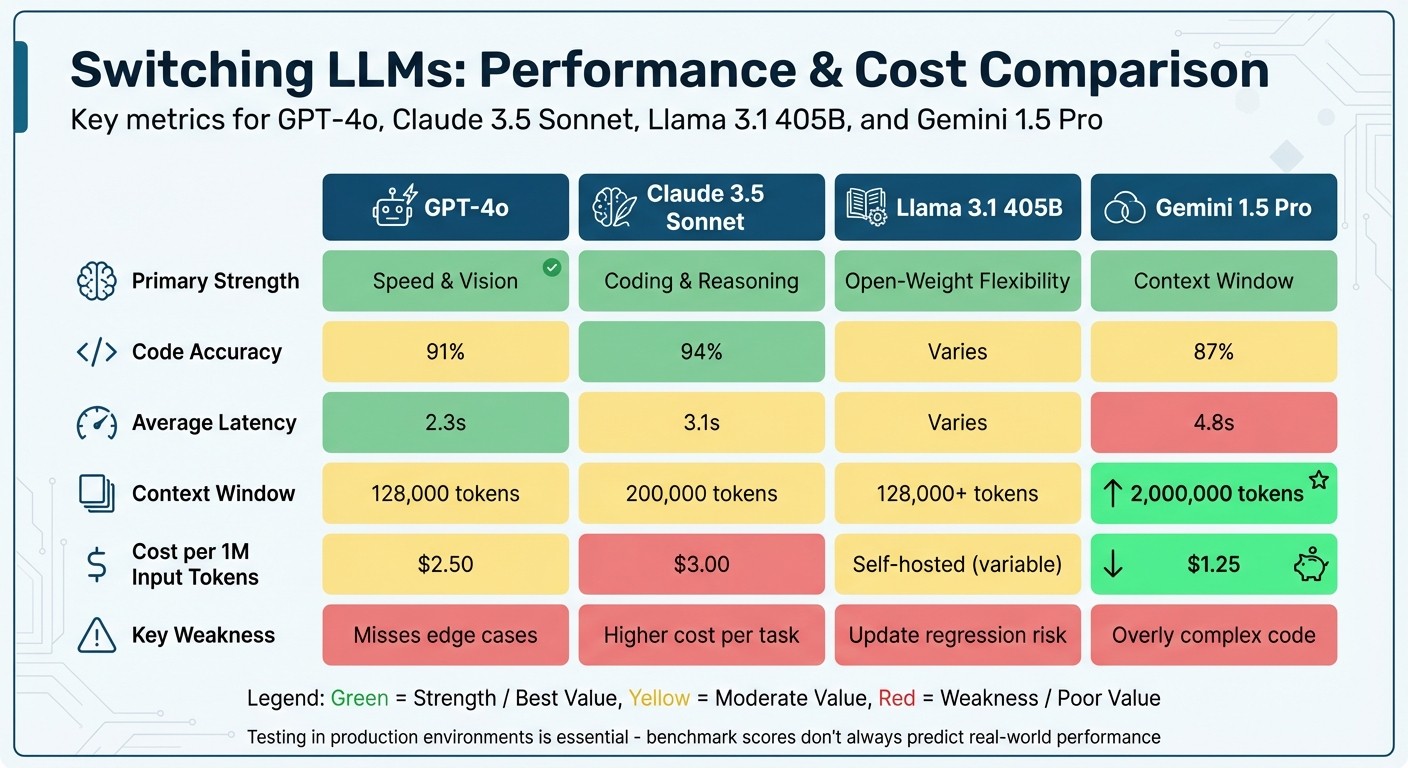

Quick Comparison:

Model | Strengths | Weaknesses | Cost/1M Input Tokens | Context Window |

|---|---|---|---|---|

GPT-4o | Speed, cost efficiency | Misses edge cases | $2.50 | 128,000 tokens |

Claude 3.5 Sonnet | Coding, reasoning | Higher cost | $3.00 | 200,000 tokens |

Llama 3.1 405B | Open-source, classification | Update regressions | Self-hosted | 128,000+ tokens |

Gemini 1.5 Pro | Long-context tasks | Slower response times | $1.25 | 2,000,000 tokens |

Switching models requires careful testing to avoid hidden costs, errors, or performance drops. Tools like Latitude can streamline testing by validating compatibility and ensuring smooth transitions.

LLM Comparison: GPT-4o vs Claude 3.5 Sonnet vs Llama 3.1 405B vs Gemini 1.5 Pro

1. GPT-4o

Accuracy

When evaluating GPT-4o's compatibility, accuracy is a key starting point. The model performs well across various domains, achieving scores like 86–88% on MMLU, 72.4% on SWE-bench Verified for coding, and impressive results on professional exams - 83.1% on USMLE Step 1, 85.39% on CFA Level 1, and 90.91% on SAT Reading & Writing.

However, it does face challenges with complex, multi-step reasoning tasks.

"GPT-4o seems drunk and will ignore important details and just spew out some code... For Claude Opus, I actually often trust it to rewrite my methods correctly."

Raymond Yeh, Founder of Wielded

Despite this, GPT-4o ranked first on the LMSYS Chatbot Arena leaderboard in mid-2024, boasting a 65% average win rate. Still, users have noted occasional inconsistencies when it processes intricate instructions. With accuracy outlined, let’s move on to its performance in interactive settings.

Latency

GPT-4o is designed for quick interactions, making it a solid choice for real-time applications. Its Time to First Token (TTFT) ranges between 150 and 300 ms, and it generates 52–78 tokens per second, depending on the provider. For real-time voice interactions, the model averages a 320 ms response time. Monitoring data from April 2026 shows an average latency of 860 ms, with daily variations spanning 479 ms to 1.3 seconds.

Provider selection plays a significant role in performance. For instance, OpenAI’s API delivers a throughput of 173 tokens/s, compared to Microsoft Azure's 113.3 tokens/s. This makes OpenAI’s API particularly appealing for applications like customer-facing chatbots or real-time code completion tools. Now, let’s take a closer look at the cost.

Cost

At $2.50 per million input tokens and $10.00 per million output tokens, GPT-4o is about 20% more expensive than GPT-4.1, which charges $2.00 and $8.00, respectively. However, the model offers cost-saving options like prompt caching at $1.25 per million tokens - a 50% discount on cached inputs - and a 50% discount through the Batch API for tasks that don’t require immediate responses.

Real-life examples highlight its cost efficiency. PerUnit, for instance, slashed their monthly expenses from $1,200 to $180 by switching to GPT-4o mini for high-volume tagging tasks. Similarly, Leaper developers cut costs by 60% by directing 45% of simpler tasks to cheaper models while reserving GPT-4o for more complex reasoning.

Output Consistency

Over a six-month production period in 2026, GPT-4o maintained an impressive 99.7% uptime. It scored 81.0% on IFEval, showcasing strong performance with straightforward instructions, but only 27.8% on MultiChallenge, indicating struggles with deeply nested or highly complex tasks. Compared to GPT-4.1, GPT-4o takes a more interpretive approach to instruction handling.

The model comes with a 128K token context window, which is standard for production but notably smaller than GPT-4.1’s 1.05M token context window.

2. Claude 3.5 Sonnet

Accuracy

Claude 3.5 Sonnet demonstrates exceptional coding capabilities, consistently surpassing GPT-4o in tasks like code accuracy, refactoring, and debugging. Its performance on HumanEval coding benchmarks ranges from 88.7% to 92.0%, and it achieved 88.7% on MMLU for undergraduate-level knowledge tests. In real-world production scenarios, it delivered 94% accuracy, outpacing GPT-4o's 91%.

The model also shows a 40% improvement in reasoning over the Claude 3 family, averaging 6.8 reasoning steps with improved self-correction. It stands out in following complex, multi-step instructions, achieving over 95% task completion accuracy while maintaining structure - an area where competitors often fall short. In a test of 100 identical coding tasks conducted by Techiesline, Claude 3.5 Sonnet achieved 94% code accuracy, excelling in creating accessible React components, while GPT-4o struggled with API integration edge cases.

"Claude 3.5 Sonnet writes code we'd feel confident deploying tomorrow."

Line Techies

Latency

When it comes to speed, Claude 3.5 Sonnet operates at twice the speed of Claude 3 Opus, with a 67% reduction in time-to-first-token. In March 2026, Ian Paterson, a tech executive, tested 15 LLMs on 38 real-world production tasks. Claude 3.5 Sonnet achieved a median response time of 4.6 seconds and a flawless 100% pass rate. This balance of strong reasoning capabilities and mid-tier speed makes it ideal for context-sensitive tasks and multi-step workflows.

Cost

Claude 3.5 Sonnet offers impressive cost efficiency, priced at $3.00 per million input tokens and $15.00 per million output tokens - an 80% cost reduction compared to Claude 3 Opus, along with improved performance. In July 2024, Asana's LLM Foundations team tested the model on their Work Graph. It achieved a 90% success rate on their tool-use benchmark (up from 76% with Claude 3 Sonnet) and passed 78% of their internal unit tests for handling long contexts. These results prompted Asana to adopt Claude 3.5 Sonnet as their default AI workflow agent.

"Claude 3.5 Sonnet is 67% faster than its predecessor, and is simply the best model at writing and finding insight in our data that we've tested."

Bradley Portnoy, Asana

Output Consistency

Claude 3.5 Sonnet excels in maintaining complex conditional logic, an area where other models often struggle. With a 200,000-token context window, it performs exceptionally well in "needle-in-a-haystack" tests, surpassing GPT-4 in cross-referencing details across lengthy documents of 50+ pages. The model earned a 4.8/5 overall score and was rated the top general-purpose AI model for professional tasks in early 2026. However, some users report it can be overly verbose for simpler tasks and occasionally too cautious due to strict safety filters. Despite these minor drawbacks, its strong performance in instruction following and handling complex contexts makes it a standout choice in cross-model compatibility testing frameworks.

3. Llama 3.1 405B

Accuracy

Llama 3.1 405B stands out as the first open-source model to compete directly with top-tier closed-source options like GPT-4o and Claude 3.5 Sonnet. On classification tasks, it matched Gemini 1.5 Pro with a 74% accuracy rate, surpassing both Claude 3.5 Sonnet (70%) and GPT-4o (61%). Notably, it achieved the highest F1 score of 77.97% in customer ticket classification, showcasing a strong balance between precision and recall.

"For the first time since the first capable LLM model was released, we have an open sourced model (Llama 3.1 405b) that can rival the best closed sourced models."

In math-related tasks, the model performed well with 79% accuracy, equaling Claude 3.5 Sonnet and Gemini 1.5 Pro, though it fell short of GPT-4o's 86%. For reasoning tasks, it scored 56%, outperforming Claude 3.5 Sonnet (44%) but trailing GPT-4o (69%) and Gemini 1.5 Pro (64%). However, researchers from UC San Diego and Apple noted a potential drawback: "negative flips", where previously correct answers become incorrect after updates. By using compatibility adapters, these issues can be reduced by up to 40%. With these accuracy benchmarks in mind, latency performance becomes the next critical factor.

Latency

Latency depends significantly on the provider. Microsoft Azure leads with the fastest time-to-first-token (TTFT) at 1.97 seconds. Meanwhile, Amazon Bedrock's latency-optimized setup offers a TTFT of 2.15 seconds and a throughput of 74.0 tokens per second. For a 500-token response, Amazon Bedrock's latency-optimized configuration completes in just 8.91 seconds - considerably faster than Azure's 19.09 seconds, despite Azure's better TTFT. On the other hand, Amazon Bedrock's standard configuration has a slower TTFT of 8.14 seconds but comes with lower costs. These variations make it essential to weigh latency against token pricing.

Cost

Llama 3.1 405B provides a clear cost advantage with competitive pricing across providers. Amazon Bedrock Standard offers the best deal at $2.40 per 1M tokens (based on a blended 3:1 input/output ratio), while its latency-optimized version costs $3.00 per 1M tokens. Microsoft Azure is more expensive, charging $8.00 per 1M tokens ($5.33 for input and $16.00 for output). These rates are still lower than GPT-4o's pricing of $5.00 per 1M input tokens and $15.00 per 1M output tokens. Additionally, Llama 3.1 405B is efficient, using only 3.9M tokens compared to the 8.0M token average for its class, as reported by the Artificial Analysis Intelligence Index. While cost efficiency is a strong point, output consistency remains a crucial aspect of the model's overall performance.

Output Consistency

With an upgraded 128K token context window - far surpassing the 8K window of earlier versions - Llama 3.1 405B is well-equipped to manage lengthy documents effectively. Its consistency is evident in its strong F1 score of 77.97% for classification tasks, making it particularly suitable for applications like spam detection. However, users should be mindful of potential inconsistencies when updating versions, as some use cases may experience regressions. To ensure reliability, performance is typically measured at the P50 (median) level over a 72-hour period to account for infrastructure variability. These factors highlight the challenges and considerations of cross-model compatibility when switching between LLM providers.

4. Gemini 1.5 Pro

Accuracy

Gemini 1.5 Pro features a massive 2-million-token context window, allowing it to handle large datasets with impressive recall. It achieves 100% recall for up to 530,000 tokens, 99.7% recall at 1 million tokens, and still maintains 99.2% recall even with 10 million tokens.

In benchmark testing, the model scored 81.9% on MMLU, surpassing GPT‑4 Turbo's 80.48%. For math-specific tasks, it achieved 91.7% on GSM8K and 86.5% on the advanced MATH benchmark. When it comes to code generation, it outperformed GPT‑4 Turbo with an 84.1% score on HumanEval, compared to GPT‑4 Turbo's 73.17%. It also excels in multimodal tasks, scoring 63.0% on VATEX, significantly ahead of GPT‑4 Turbo's 56.0%. For tasks requiring complex, multi-step reasoning, Gemini 1.5 Pro is the go-to choice.

Latency

Gemini 1.5 Pro prioritizes advanced reasoning and handling extensive contexts over speed. Its Mixture-of-Experts (MoE) architecture is optimized for tasks like logical reasoning and code generation, but latency increases with input complexity. For example, processing 50 images can take up to 60 seconds. If sub-second response times are critical, Gemini 1.5 Flash - a distilled version of Pro - offers faster processing tailored for speed. The model’s Intelligence Index score of 16 (compared to a median of 10 for similar models) reflects its emphasis on capability over speed. These latency factors also influence pricing considerations.

Cost

Gemini 1.5 Pro uses tiered pricing, with costs doubling for prompts exceeding 128,000 tokens. Standard rates are $1.25 per million input tokens and $5.00 per million output tokens, but for larger contexts, these increase to $2.50 and $10.00, respectively. However, Google reduced these prices significantly in late 2024 - by 64% for inputs and 52% for outputs. For large-scale tasks, the Batch API provides a 50% discount on asynchronous operations like nightly summarizations. Additionally, Context Caching can cut input costs by up to 90% by storing frequently accessed datasets (e.g., product catalogs) at a rate of $4.50 per million tokens per hour.

Output Consistency

Thanks to its extensive context window, Gemini 1.5 Pro can process around 1,500 pages of text or 30,000 lines of code in a single prompt, ensuring consistent output for large datasets. Its multimodal capabilities - handling text, images, audio, and video - make it reliable for complex workflows. The Context Caching feature further improves consistency by ensuring that frequently referenced documents produce stable outputs across multiple requests. However, the model’s maximum output token limit is capped at 8,192, which may require breaking longer responses into chunks.

Comparison Summary

To make an informed decision when switching between large language models (LLMs), it's important to weigh each one's strengths and trade-offs. Here's a concise comparison highlighting key metrics like performance, cost, and capabilities.

Claude 3.5 Sonnet stands out in coding accuracy and reasoning, achieving an impressive 94% accuracy on real-world tasks and identifying 93% of production bugs. In comparison, GPT-4o scores 87% in similar scenarios. However, this high technical performance comes at a higher cost - approximately $0.0052 per task versus GPT-4o's $0.0031.

GPT-4o shines in speed and multimodal functionality, offering a quick 2.3-second response time. With a 128,000-token context window and a cost of $2.50 per million input tokens, it's ideal for rapid prototyping and high-volume tasks. That said, it occasionally misses edge cases that Claude effectively handles.

Gemini 1.5 Pro is the go-to choice for tasks requiring massive context, with its 2,000,000-token window - far surpassing Claude's 200,000 and GPT-4o's 128,000 tokens. It's also the most budget-friendly option at $1.25 per million input tokens, making it well-suited for retrieval-augmented generation and video analysis. On the downside, it has a slower response time and sometimes generates overly intricate code.

Llama 3.1 405B offers flexibility with open-weight hosting, eliminating marginal hosting costs. However, it has a known issue with "negative flips", where updates can cause previously correct predictions to fail. This makes rigorous compatibility testing essential when switching between versions.

Here’s a quick side-by-side comparison of these models:

Model | Primary Strength | Code Accuracy | Avg. Latency | Context Window | Cost per 1M Input | Key Weakness |

|---|---|---|---|---|---|---|

GPT-4o | Speed & Vision | 91% | 2.3 s | 128,000 tokens | $2.50 | Misses edge cases |

Claude 3.5 Sonnet | Coding & Reasoning | 94% | 3.1 s | 200,000 tokens | $3.00 | Higher cost per task |

Gemini 1.5 Pro | Context Window | 87% | 4.8 s | 2,000,000 tokens | $1.25 | Overly complex code |

Llama 3.1 405B | Open-Weight Flexibility | Varies | Varies | 128,000+ tokens | Self-hosted | Update regression risk |

This comparison emphasizes the critical role of automated testing tools, like those provided by Latitude, to ensure smooth transitions and compatibility when deploying different LLMs in production.

Automated Testing with Latitude

Switching between large language models (LLMs) often comes with compatibility hurdles. Latitude's open-source platform helps tackle these issues early by focusing on observability, evaluation, and feedback during LLM migrations. This testing framework builds on earlier insights into model performance metrics.

Latitude uses a three-tier evaluation system to validate model compatibility at various levels. First, deterministic rules handle straightforward checks like validating JSON formats or ensuring character limits are met. These rules are quick and cost-effective. Next, model-based evaluations assess more complex qualities, such as tone and coherence, using tools like GPT-4 or Claude-3. After these initial checks, Latitude incorporates both automated and human reviews to deepen the analysis. For critical use cases, Human-in-the-Loop reviews bring in expert oversight to catch nuanced issues that automated systems might overlook.

For example, if you're migrating from GPT-4o to Claude 3.5 Sonnet, the process begins by defining quality standards and acceptance criteria tailored to your application. Latitude's batch mode lets you run parallel tests for both models against a "golden dataset", making it easier to spot regressions and address potential problems early.

Latitude also provides composite scores that combine results from all evaluation methods, offering a clear summary of model performance. You can flag problematic traits, like toxicity or hallucinations, as "negative" within the platform to fine-tune overall scores. The system even offers prompt suggestions based on evaluation data, helping you adjust and improve your setup for the new model.

Additional features include live evaluations to monitor performance drift in production, A/B testing to compare models under real-world conditions, and optimization loops to refine prompts based on performance metrics. These tools work hand-in-hand with the performance benchmarks previously discussed, ensuring quality standards are upheld across models like GPT-4o, Claude 3.5 Sonnet, Gemini 1.5 Pro, and Llama 3.1 405B.

Conclusion

Migrating to a large language model (LLM) isn't as simple as flipping a switch. Each model has its own strengths and weaknesses, which only become clear through thorough testing in real-world scenarios. For instance, GPT-4o excels with shorter contexts, while Claude 3.5 Sonnet performs well with extended ones. Gemini 1.5 Pro shines in tasks requiring long-context understanding, and Llama 3.1 405B offers a budget-friendly, open-source option. Interestingly, public leaderboards often fail to predict actual production performance accurately - correlations are below 60%. This makes rigorous, continuous testing a non-negotiable step.

"You have to test it to know it. Benchmarks and model documentation are useful, but the only reliable way to evaluate a model is to run it through your own system, prompts, and datasets."

Nate Lee, ML Engineer, Thoughtworks

The diverse capabilities of these models highlight the importance of production-based testing. A structured, gate-based approach can simplify selection: start by eliminating models that don't meet quality, latency, or reliability standards, then focus on cost considerations. Testing should involve 50–200 real-world examples from production traffic rather than synthetic data. To standardize results, use consistent prompt templates and fixed inference settings. For tasks like PII detection or routing, aim for at least 90% accuracy.

"The best LLM today may not be the best next quarter."

Trismik

Choosing the right LLM is an ongoing process, not a one-time decision. Latitude's open-source testing framework supports this by enabling continuous evaluation and optimization. It combines automated and human feedback, integrates with CI/CD pipelines for regression testing, and provides robust observability in production. Features like Live Mode track performance drift caused by silent model updates, while automated loops refine prompts based on real-world usage. Adopting this production-first testing mindset ensures your model selection evolves with changing performance and cost demands.

FAQs

What tests should I run before switching LLMs?

Before transitioning to a new large language model (LLM), it's important to run a series of tests to ensure it aligns with your requirements and performs as expected. Here's what to focus on:

Evaluate Response Accuracy and Consistency: Check how well the new LLM handles queries, ensuring its answers are precise and reliable across different scenarios. Consistency in responses is just as important as accuracy.

Test Dynamic Prompt Behavior: Assess how the model reacts to various prompt styles and structures. This helps verify its flexibility and adaptability to your specific use cases.

Assess Integration Compatibility: Ensure the new LLM integrates smoothly with your existing systems. Compatibility issues can disrupt workflows, so thorough testing is essential.

Monitor Performance Metrics: Use automated tools to track uptime, response times, and overall reliability. These metrics are key to maintaining a stable and efficient application.

Running these tests allows you to spot potential issues early and confirm the new LLM is a good fit before making the switch. It’s a proactive way to ensure a smooth transition and avoid unexpected setbacks.

How do I prevent regressions from model updates?

To avoid regressions in AI systems, it's crucial to adopt systematic regression testing and continuous evaluation practices. Think of prompts and outputs as you would software: they need careful versioning and regular testing. By running these tests against a "golden set" of high-quality datasets, you can identify and address potential issues before they escalate.

Automated evaluation pipelines, combined with version control, are key tools here. They allow you to compare outputs over time, spot inconsistencies early, and ensure your system remains reliable. Additionally, creating evaluation loops that include clear success criteria and diagnostic tools helps maintain stability while driving long-term improvements.

How can Latitude help validate a model migration?

Latitude helps streamline model migration by offering tools for automated evaluation pipelines. With Latitude, you can test models against datasets or live logs to assess performance. By using quantitative metrics to measure both consistency and accuracy, it ensures the new model meets the necessary reliability and quality benchmarks for production.