Structured user feedback and observability tools reveal real-world failures, balance datasets, and improve fine-tuned model performance.

César Miguelañez

Fine-tuning a model is just the start. The real test is how it performs in real-world scenarios. While synthetic benchmarks evaluate predefined tasks, they miss unpredictable issues that arise in production. That’s where user feedback becomes critical - it reveals problems like tone mismatches, context-specific errors, or unexpected failures that benchmarks overlook.

Key Insights:

User Feedback vs. Benchmarks: Benchmarks test controlled scenarios, but feedback highlights real-world gaps, such as edge cases and nuanced errors.

Research Findings: Studies show models fine-tuned with feedback outperform others, even surpassing human accuracy in specific tasks.

Practical Methods: Teams use structured feedback systems, balanced datasets, and advanced tools to refine models effectively.

Tools for Feedback Integration: Platforms like Langfuse, LangSmith, and Latitude simplify tracing, evaluation, and scaling feedback processes.

Takeaway:

User feedback is essential for improving model performance. By combining structured data collection, targeted reviews, and the right tools, teams can address hidden issues and refine models to meet user needs more effectively.

Research and Case Studies on Feedback-Driven Evaluation

Academic studies and real-world applications highlight how user feedback bridges the gap between theoretical benchmarks and practical performance. This approach often uncovers improvements that controlled tests might overlook.

Research Papers on User Feedback

Studies have shown that models refined using user feedback can even outperform human experts. For instance, in August 2025, researchers introduced the "Dean of LLM Tutors" framework to assess the quality of educational feedback. They fine-tuned a GPT-4.1 model using 2,000 feedback instances labeled by human experts. The result? The model achieved 79.8% accuracy and a 79.4% F1-score, surpassing the human average accuracy of 78.3%.

"The 'Dean of LLM Tutors' framework represents a significant advance in our ability to deliver reliable and high-quality educational feedback to students automatically in a scalable way." - Keyang Qian et al.

This research also explored performance differences between zero-shot and few-shot settings. For example, the o3-pro model achieved 74.3% accuracy in zero-shot scenarios, while o4-mini led with 74.9% accuracy in few-shot labeling. Models using chain-of-thought reasoning excelled at identifying hallucinations, with Gemini 2.5 Pro reporting zero hallucinations. In contrast, smaller models like GPT-4.1 nano struggled significantly.

These findings underline the effectiveness of user feedback in enhancing model performance, even in controlled environments.

Production Case Studies

Real-world applications further validate the impact of feedback-driven approaches. In January 2022, OpenAI introduced InstructGPT, a model with 1.3 billion parameters, fine-tuned using reinforcement learning from human feedback (RLHF). Remarkably, InstructGPT outperformed GPT-3, which has 175 billion parameters, while using less than 2% of the original compute.

"Fine-tuning with human feedback is a promising direction for aligning language models with human intent." - Long Ouyang et al.

From January 2023 to June 2024, Stanford Health Care tested the MedFactEval framework with a panel of seven physicians to establish ground truth for discharge summaries. The automated "LLM Jury" achieved an 81% agreement rate with expert evaluations. This demonstrates that structured human feedback can scale clinical validation to match expert-level performance in critical medical tasks.

These examples make it clear: user feedback not only sharpens model outputs but also drives measurable improvements in real-world applications.

Methods for Collecting and Organizing User Feedback

An effective feedback system often relies on a structured, three-layer framework: Feedback Configs, which define organization-wide standards (like accuracy ratings or binary pass/fail schemas); Rubric Items, which outline task-specific guidelines; and individual Feedback Entries, which log scores and annotations for specific tasks.

This structure helps teams maintain consistency across various evaluation efforts while tailoring methods to specific needs. For example, a customer support team might use a simple pass/fail system to assess response quality, whereas a medical AI team could rely on detailed Likert scales to evaluate clinical accuracy. Let’s explore how feedback validation works and how balanced datasets are built.

Annotation Queues and Feedback Validation

Validation rules are essential for ensuring consistent feedback. For numeric ratings, clear boundaries are defined. Categorical feedback requires at least two distinct labels, while freeform text provides detailed qualitative insights.

Striking a balance between scalable data collection and meaningful insights can be tricky. Quantitative methods, such as binary ratings or Likert scales, are excellent for benchmarking and can be automated for large-scale use. On the other hand, qualitative insights - gathered through iterative reviews - offer deeper understanding but are time-intensive and less scalable.

Creating Balanced Feedback Datasets

Balanced datasets are crucial to avoid biased evaluations. For reference datasets, it’s important to ensure an even distribution of positive and negative labels (e.g., equal numbers of 'pass' and 'fail' entries) to prevent skewed results.

Explicit feedback methods, like star ratings or thumbs up/down, provide clear indicators of user satisfaction but often suffer from low response rates. In contrast, implicit feedback, such as user behaviors like copying outputs, retrying queries, or abandoning tasks, generates higher volumes of data. However, interpreting this data requires more advanced analysis techniques.

Platforms for User Feedback Integration

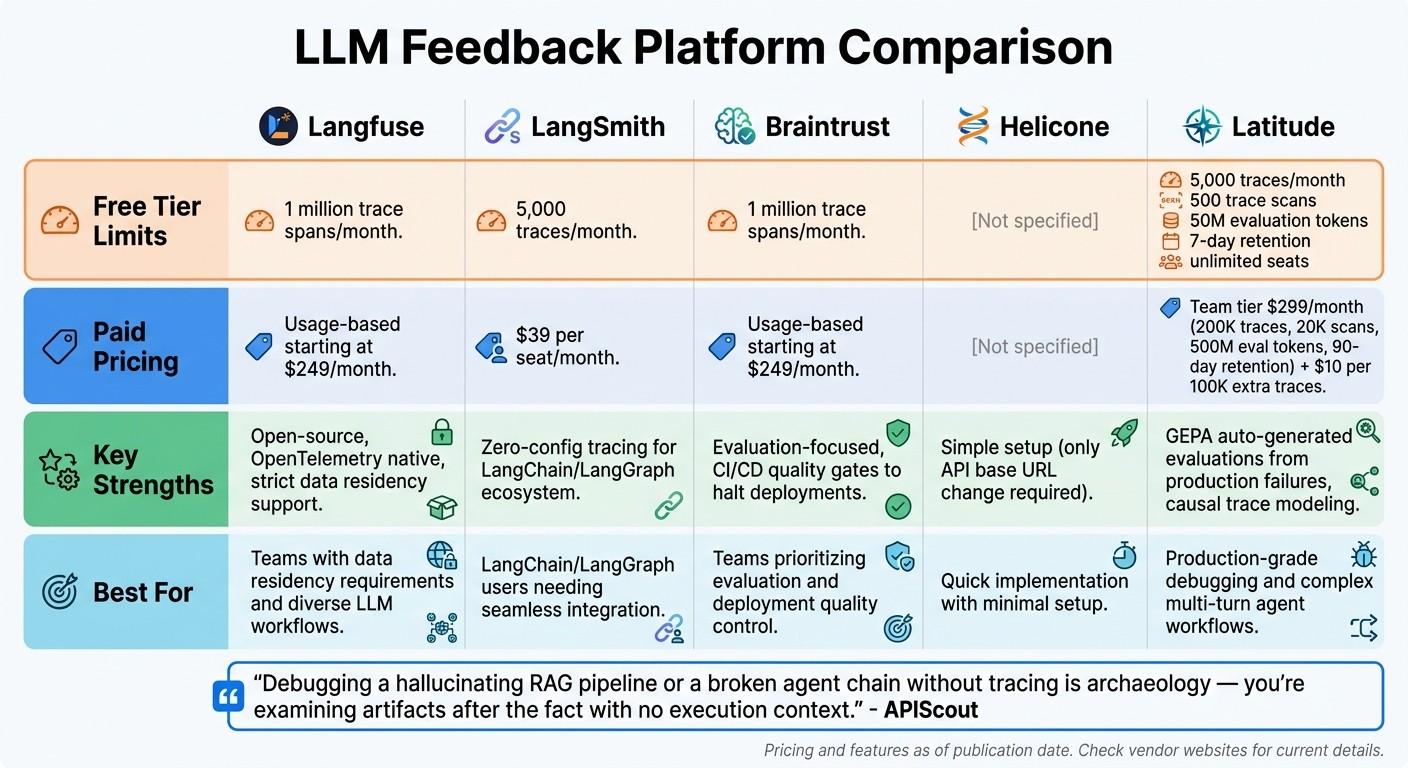

LLM Feedback Platform Comparison: Features, Pricing, and Free Tier Limits

Feature Comparison of Feedback Tools

Choosing the right feedback platform depends on your team’s workflow, technical needs, and priorities. Langfuse and Braintrust stand out with free tiers offering 1 million trace spans per month, while LangSmith provides a more limited free option of just 5,000 traces per month. These differences can be crucial for production teams looking to scale.

For teams working within the LangChain or LangGraph ecosystem, LangSmith offers seamless zero-configuration tracing with minimal setup. However, using it with alternative frameworks can lead to higher integration costs and potential lock-in. On the other hand, Langfuse, an open-source leader, supports OpenTelemetry natively and integrates with a variety of LLM workflows, making it a great choice for teams with strict data residency needs. Meanwhile, Braintrust focuses on evaluation, enabling teams to halt deployments if quality scores drop - a valuable feature for maintaining reliability through CI/CD gates. Helicone simplifies tracing by requiring only a change to your API base URL, making it an easy entry point.

"Debugging a hallucinating RAG pipeline or a broken agent chain without tracing is archaeology - you're examining artifacts after the fact with no execution context." - APIScout

Pricing varies significantly. LangSmith charges $39 per seat/month, which can add up for larger teams with lower trace volumes. In contrast, Langfuse and Braintrust offer usage-based pricing starting at $249/month, better suited for teams handling high-volume workloads.

Latitude: AI Observability and Evaluation Platform

Some platforms go beyond basic feedback tools to include advanced observability features, and Latitude stands out by offering production-grade diagnostics. This platform doesn’t just treat LLM calls as isolated events; it models execution as a causal trace of dependent steps, making it vital for debugging complex multi-turn agent workflows.

A key feature of Latitude is GEPA (auto-generated evaluations), which creates test cases directly from real production failures instead of relying on synthetic benchmarks. This approach helps teams identify and fix regressions before they affect users.

Latitude’s pricing is divided into three tiers:

Free: Includes 5,000 traces per month, 500 trace scans, 50 million evaluation tokens, and 7-day retention, with unlimited seats.

Team: Costs $299/month and scales to 200,000 traces, 20,000 trace scans, 500 million evaluation tokens, and 90-day retention. Overage charges are $10 per 100,000 extra traces.

Enterprise: Offers custom pricing with features like on-premises deployment, role-based access control, SOC2 and ISO27001 compliance, SAML SSO, and dedicated support.

Latitude also supports self-hosting for free, giving teams full control over their data, which is especially appealing for those with stringent security or compliance requirements.

Common Challenges and Solutions

Filtering Noisy Feedback

User feedback can be messy. Some users submit vague complaints, others misinterpret issues, and a portion of feedback might not even relate to the model's actual performance. To cut through this noise, teams need a clear strategy for analyzing errors.

One effective method is a structured four-step process using "open coding." This involves starting with a wide range of production traces and applying raw, descriptive labels to each failure without any predefined categories. By taking this bottom-up approach, unique failure patterns naturally emerge. Once these patterns are identified, teams can measure their frequency and impact to prioritize fixes.

Deciding between rating systems and iterative reviews depends on your specific needs. Rating systems are great for scalable, automated monitoring, while iterative reviews, though time-intensive, provide a deeper understanding of edge cases. Many teams combine both approaches: automated scoring for ongoing monitoring and manual reviews to dig into outliers.

Once the noise is filtered out, the next challenge is ensuring the dataset is balanced for a more reliable model evaluation.

Fixing Dataset Imbalances

After cleaning up noisy feedback, the focus shifts to addressing dataset imbalances. Feedback datasets often lean heavily toward common use cases, leaving rarer scenarios underrepresented. This imbalance can hurt model performance when dealing with those less frequent but critical edge cases. Tackling this issue involves two main strategies: data-level and algorithm-level adjustments.

Data-level methods focus on modifying the dataset itself before training. For example:

Oversampling duplicates minority examples to balance the dataset, though it can lead to overfitting.

Undersampling reduces the size of the majority class, which speeds up training but risks losing valuable data.

Synthetic data generation creates diverse examples by techniques like paraphrasing, back-translation, or using large language models (LLMs) to produce niche scenarios.

On the other hand, algorithm-level methods tweak the learning process to better handle imbalances. These include:

Cost-sensitive learning, which assigns higher penalties for errors on underrepresented classes.

Focal loss, which zeroes in on harder-to-classify cases.

Each method has its pros and cons. For instance, oversampling can boost recall for minority classes but may slow down training, while undersampling is computationally efficient but might lower overall accuracy. Teams often combine strategies - like pairing synthetic data generation with cost-sensitive learning - to strike the right balance between performance and efficiency.

Wrapping Things Up

Key Takeaways

User feedback plays a central role in evaluating and improving models. Without it, teams often miss the mark when it comes to identifying failures in practical, everyday use. The research and examples shared here highlight how successful teams blend quantitative metrics for broad monitoring with qualitative reviews to address nuanced edge cases. The magic lies in creating a systematic approach that transforms raw user input into meaningful updates. By applying these strategies, you can keep refining your model and stay ahead of potential issues.

Practical Steps Moving Forward

Ready to put these ideas into action? Here are some steps to help you kick-start a feedback-driven improvement process:

Log everything. Track every request, response, prompt version, and context. This data is essential for debugging and replaying interactions effectively. Think of it as the foundation for all future improvements.

Set up a consistent review routine. Dedicate 30 minutes every two weeks to review feedback trends and decide on specific changes to prompts or retrieval strategies. Regular tweaks like this can lead to steady, meaningful progress.

Adopt an "Evaluator-Optimizer" system. Use one LLM to generate responses and another to provide feedback for refinement. This creates a self-improving loop, cutting down on the need for constant human oversight.

Choose the right tools. Whether you go with Latitude for structured observability or platforms like Langfuse or LangSmith, the goal is the same: establish a clear process for gathering feedback, running evaluations, and tracking improvements over time.

FAQs

What feedback should we collect from users?

Collect both explicit and implicit feedback from users to understand their experience and improve. Explicit feedback includes direct inputs like ratings (e.g., thumbs up/down) or written comments, giving straightforward insights into user satisfaction. On the other hand, implicit feedback is drawn from user behavior, such as how long they engage with the content or the number of retries they make. While this type of feedback reveals patterns in usage, it often requires interpretation to extract meaning.

To make this feedback actionable, organize it into measurable metrics - like rubrics or rating scales. This approach helps transform subjective opinions into concrete data that can inform improvements in LLM performance, safety, and alignment.

How do we turn messy feedback into training data?

To turn unorganized feedback into useful training data, begin by annotating and structuring it to uncover actionable insights. Incorporate both explicit signals (like thumbs-up or thumbs-down ratings) and implicit signals (such as retry rates) to develop measurable metrics, including accuracy and relevance. By converting subjective feedback into clear, structured data, you can effectively guide model improvements and enhance performance over time.

How can we catch quality regressions before release?

To stay ahead of quality regressions, it's important to use continuous evaluation with real-world data, like production logs. This approach helps spot new failure cases early. Adding structured human feedback can uncover subtle issues, such as biases or hallucinations, that automated systems might miss. By combining these strategies with automated monitoring and feedback loops, you can maintain consistent benchmarks and catch potential regressions before releasing your models.